Google unveils new AI chips to rival Nvidia: Here is what they offer

Google has introduced two new chips to meet increasingly demanding AI workloads.

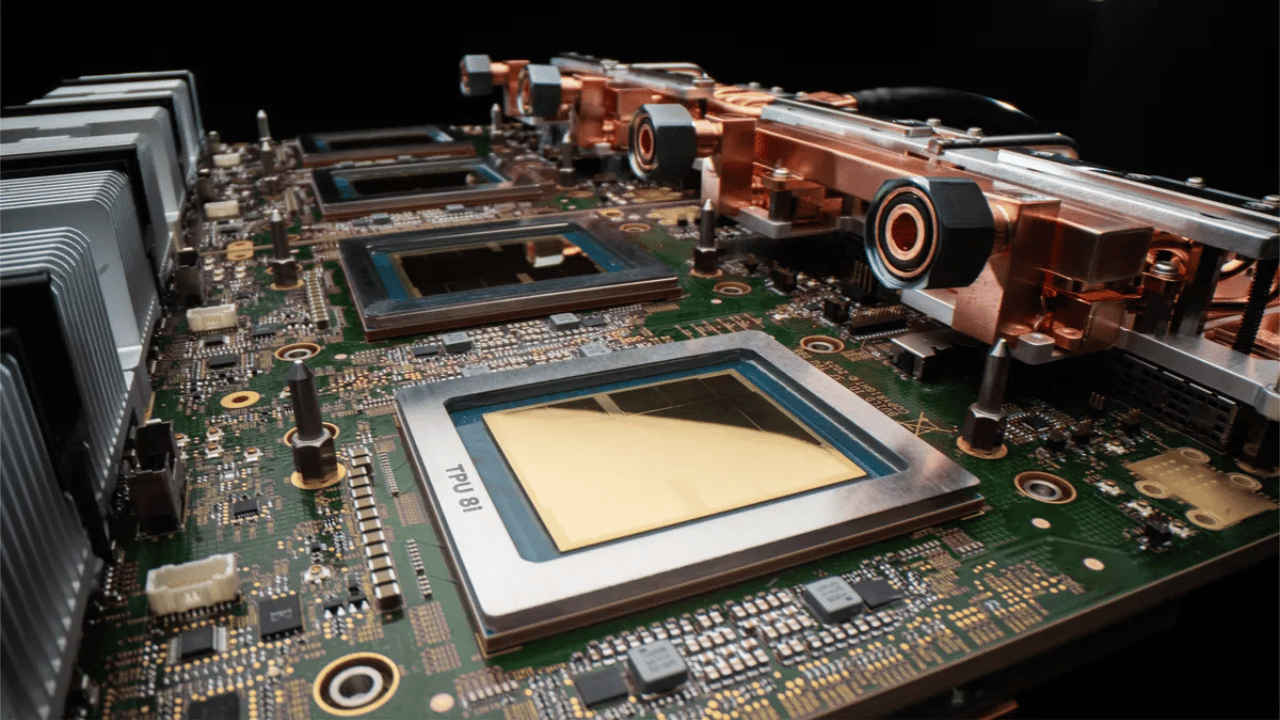

The new chips are TPU 8t and TPU 8i.

Both chips run on Google’s Axion ARM-based CPU host.

Google has introduced two new chips to meet increasingly demanding AI workloads. The company has announced its eighth-generation Tensor Processing Units (TPUs), designed to power its custom-built supercomputers. The new chips are TPU 8t and TPU 8i, with each built for a specific purpose. While one focuses on training powerful AI models faster, the other is designed to deliver quick and efficient responses.

Both chips run on Google’s Axion ARM-based CPU host and are supported by advanced liquid cooling technology, helping improve performance while keeping energy use in check. According to Google, ‘both chips can run various workloads, but specialisation unlocks significant efficiencies and gains.’ The company added: ‘Along with our full-stack purpose-built infrastructure, from networking to data centres and energy-efficient operations, they create the underlying engine that will allow us to bring highly responsive agentic AI to the masses.’

Google TPU 8t

Google describes TPU 8t as a ‘training powerhouse.’ The chip is designed mainly for training large AI models, a process that usually takes a lot of time and computing power. Google claims it can significantly speed up that process, helping reduce development cycles from months to just weeks.

The company says TPU 8t delivers nearly three times the compute performance of the previous generation.

Google TPU 8i

Google describes TPU 8i as a ‘reasoning engine.’ According to the tech giant, this chip is built to handle the intricate, collaborative, iterative work of many specialised agents. ‘TPU 8i is designed with more memory bandwidth to serve the most latency-sensitive inference workloads, which is critical because interactions between agents at scale magnify even small inefficiencies,’ Google explained.

Ayushi works as Chief Copy Editor at Digit, covering everything from breaking tech news to in-depth smartphone reviews. Prior to Digit, she was part of the editorial team at IANS.