Microsoft’s new image generation model MAI-Image-2: How it stacks up against Gemini and ChatGPT

What do you get when you put three AI image generation models in a room and ask them to draw an impossible library where gravity doesn’t work? Apparently, three very different ideas of what impossible means. More on that later.


I spent an evening running five brutally specific prompts through ChatGPT Images, Gemini’s Nano Banana Pro, and Microsoft’s newly released MAI-Image-2. Not the usual “Turn this image into Ghibli-style art”, but prompts with real technical requirements: correct physics, functional information design, and creative ambition. The goal was simply to find out where Microsoft’s new model sits in the pecking order. The short answer is third place. The longer answer is much more interesting.


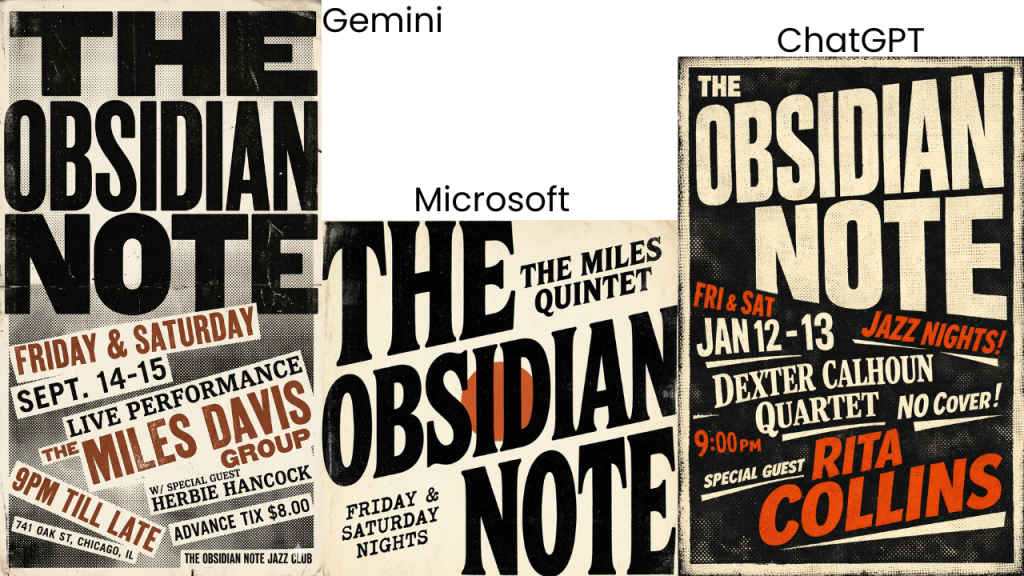

Typography

Prompt: A brutalist music poster for a fictional 1970s jazz club called “The Obsidian Note.” Dominant typography with the club name in a massive, compressed grotesque typeface bleeding off all four edges. Supporting text – dates, performer names – set in mismatched serif and monospaced fonts at chaotic angles. Color palette: raw black, dirty cream, and a single accent of burnt sienna. Halftone texture overlaid on the background. Letterpress print aesthetic. Ultra-high-resolution flat design.

ChatGPT won this cleanly. Its brutalist jazz poster – dark background, compressed grotesque type bleeding off every edge, burnt sienna used in exactly two places as an accent – was the only one that understood “brutalist” as an aesthetic directive rather than a vibe. It even invented fictional performer names, which is the correct move for a fictional venue brief.

Gemini made a genuinely strong poster but populated it with real artists. Miles Davis and Herbie Hancock showed up, along with a real Chicago address. Beautiful work, wrong brief.

MAI-Image-2 made something tasteful and pleasant. Light background, clean serif type, vintage jazz warmth. Which would be great if the prompt had asked for tasteful and pleasant. It didn’t. The prompt asked for chaos, and MAI-Image-2 flinched.

Optical physics

Prompt: A hyper-realistic macro photograph of a perfectly spherical water droplet resting on a dark obsidian surface. The droplet acts as a refracting lens, projecting an inverted, full-color caustic image of a sunlit forest canopy beneath it. Visible light dispersion creates prismatic rainbow fringes along the droplet’s equator. Shallow depth of field with razor-sharp focus on the caustic projection. Shot on a Phase One camera, f/2.8, diffused studio lighting. Photorealistic, scientifically accurate optics.

One thing I noticed is that all three share the same blind spot. Every single model rendered a decorative crystal ball instead of a water droplet, most likely because crystal ball refraction photos vastly outnumber true macro droplet shots in training data. When in doubt, all three models defaulted to the more familiar visual. That’s a shared failure worth noting.

ChatGPT won on execution. Moody studio lighting, a strong prismatic rainbow band at the sphere’s equator, and a caustic light pool projected onto the surface below. That last detail matters because it shows the model understands that light bends as it passes through the object. Gemini made the most beautiful image of the three and finished last, because it completely ignored the dark environment spec and gave me a sunlit forest scene. It was stunning, but it was the wrong photograph.

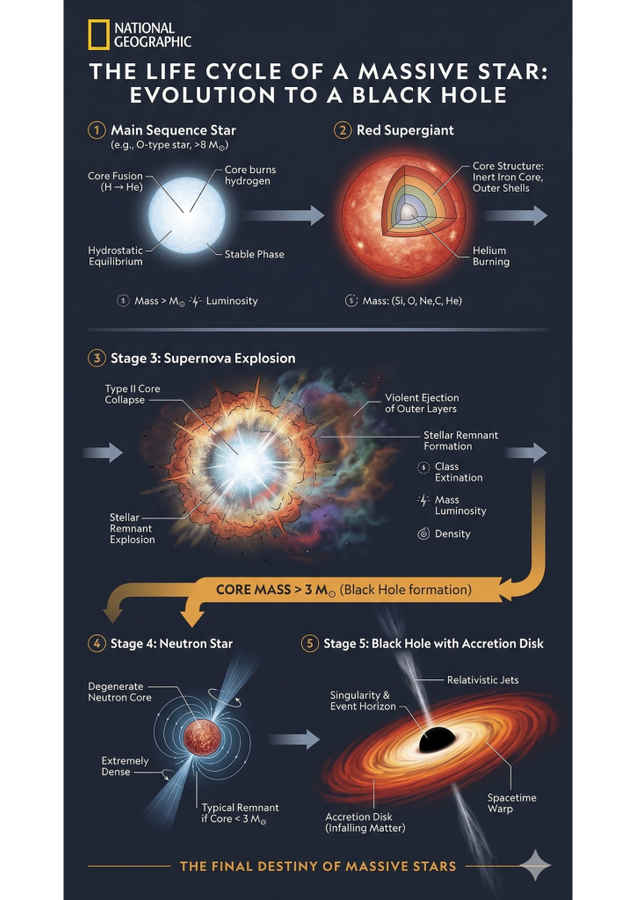

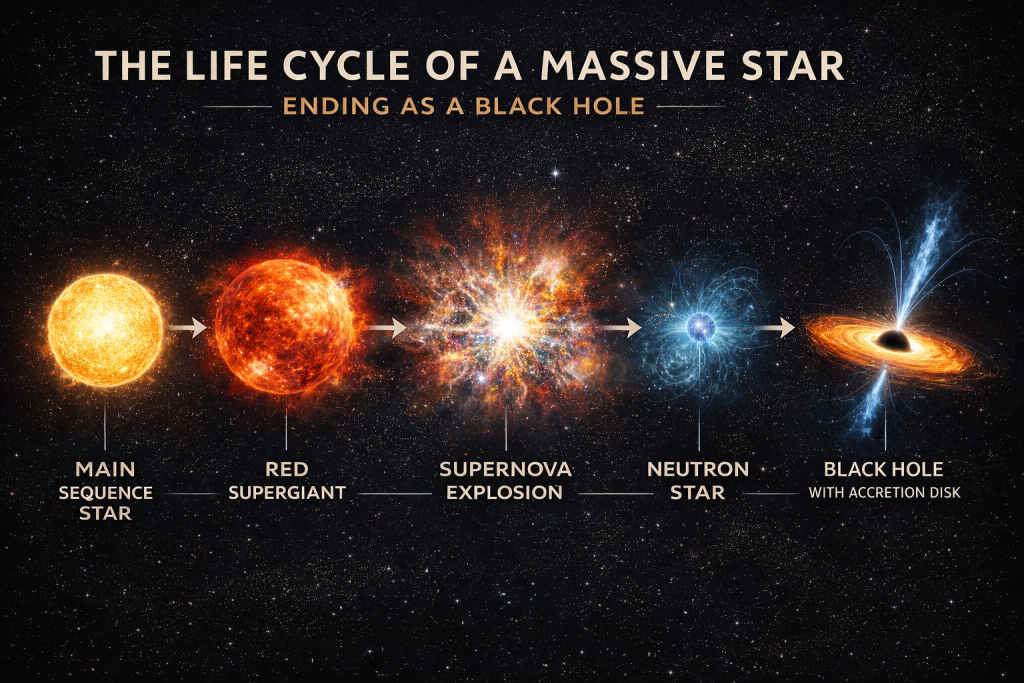

Infographic design

Prompt: A clean, editorial-style educational infographic poster illustrating the life cycle of a massive star ending as a black hole. Five clearly labeled stages in a horizontal flow: main sequence star to red supergiant to supernova explosion to neutron star to black hole with accretion disk. Each stage rendered as a crisp scientific illustration. Sans-serif labels, concise annotation lines, and a muted dark-navy background with white and amber type. Style of a National Geographic explainer. Information hierarchy is clear at a glance.

Gemini’s strongest round by a mile. Its stellar evolution infographic was the only output that understood “infographic” as a distinct genre from “illustration.” Real readable text, cross-section cutaways showing stellar interiors, scientifically accurate branching paths, precise labels. It even put a National Geographic masthead on it. Publication-ready.

ChatGPT made something gorgeous, with the scale of each stellar stage rendered beautifully, but included almost no explanatory text. High art, low information density. A poster disguised as an infographic.

MAI-Image-2’s result was the most alarming output of the entire test. Every annotation block was complete gibberish with characters that contain zero information. It had learned what infographics look like without understanding what they’re for.


Extreme macro

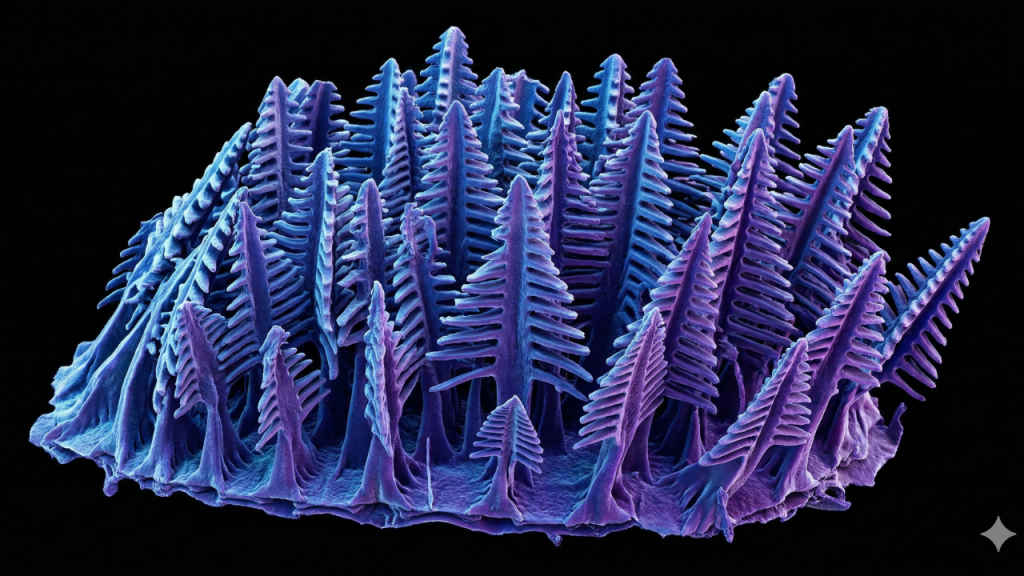

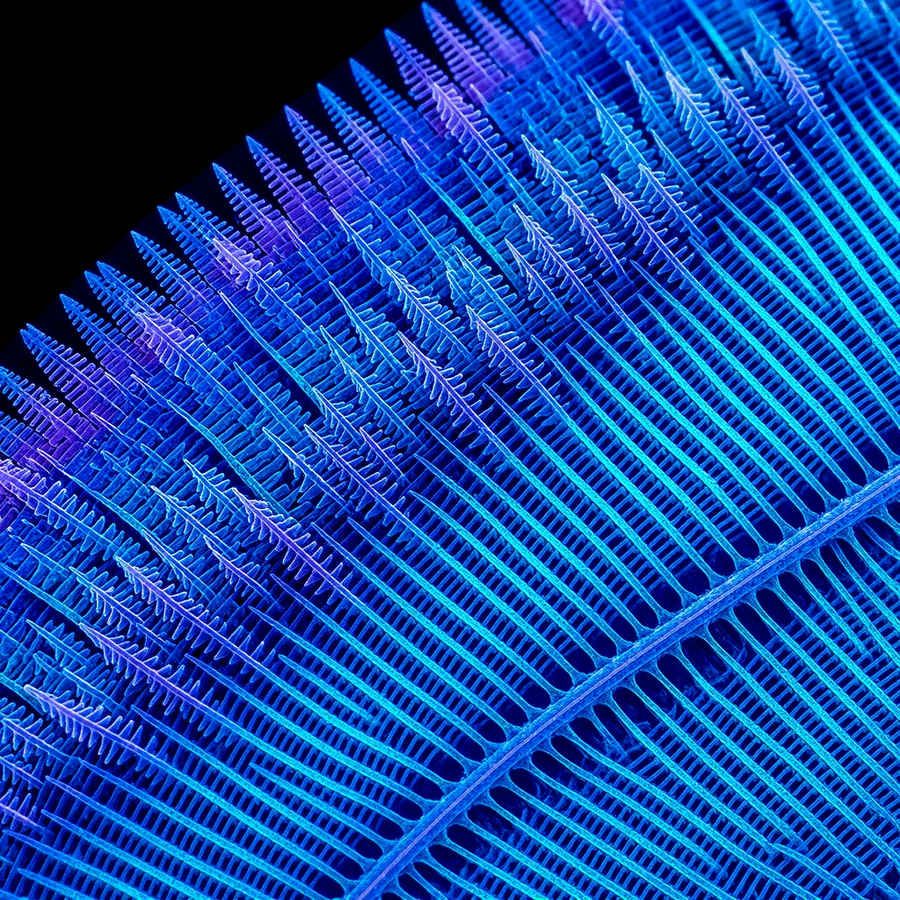

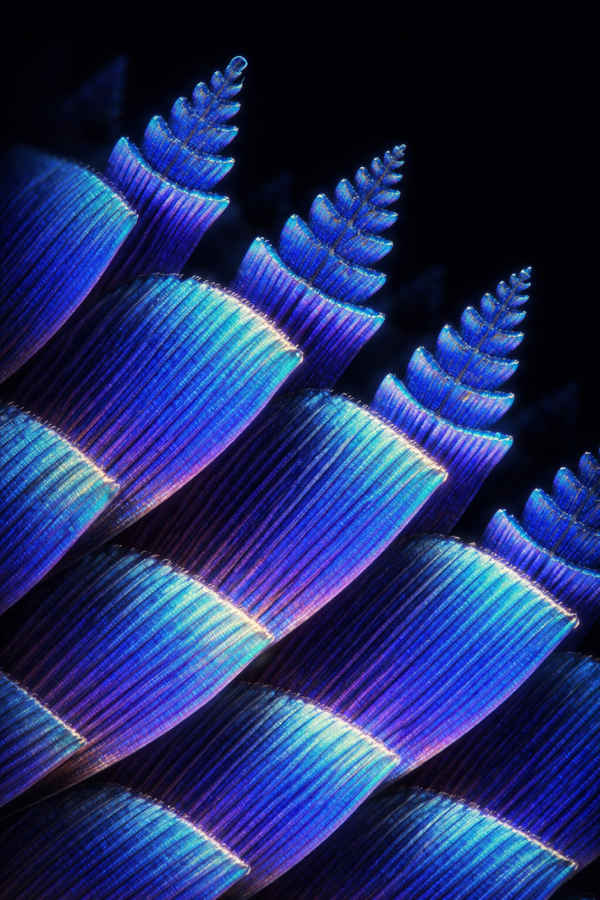

Prompt: Extreme macro photography at 25:1 magnification of a single iridescent scale from a Morpho butterfly wing. The microscopic nanostructures – a forest of Christmas-tree-shaped lamellae – are visible as physical 3D architecture, diffracting light into shifting electric blues and violets. Lit with a single off-axis fiber optic source casting long micro-shadows that reveal depth. Captured on a scientific scanning electron microscope rendered in photorealistic color. Background falls to pure black. Tack sharp across the entire frame.

The Morpho butterfly nanostructure is one of the most photographed objects in scientific literature. The correct geometry, flat horizontal shelf-like ridges stacked like a microscopic Venetian blind, is well-documented. A model that’s seen real SEM images should know this.

Gemini did. Its output correctly depicted the Christmas-tree lamellae in SEM false-colour, with the right matte texture and depth. It has clearly been trained on actual scientific publications.

MAI-Image-2 surprised me here. Its top-down view showed extraordinary sub-structure detail, with a convincing iridescent colour shift. It wasn’t perfect, as the angle missed the 3D depth and the geometry leaned more bird feather than butterfly scale, but it was impressive.

ChatGPT invented something magnificent and completely wrong. Towering columns with organic fern crowns, breathtaking alien biology that bears no resemblance to real Morpho scales. ChatGPT’s core failure seems to be that when beauty and accuracy conflict, beauty wins.

The impossible library

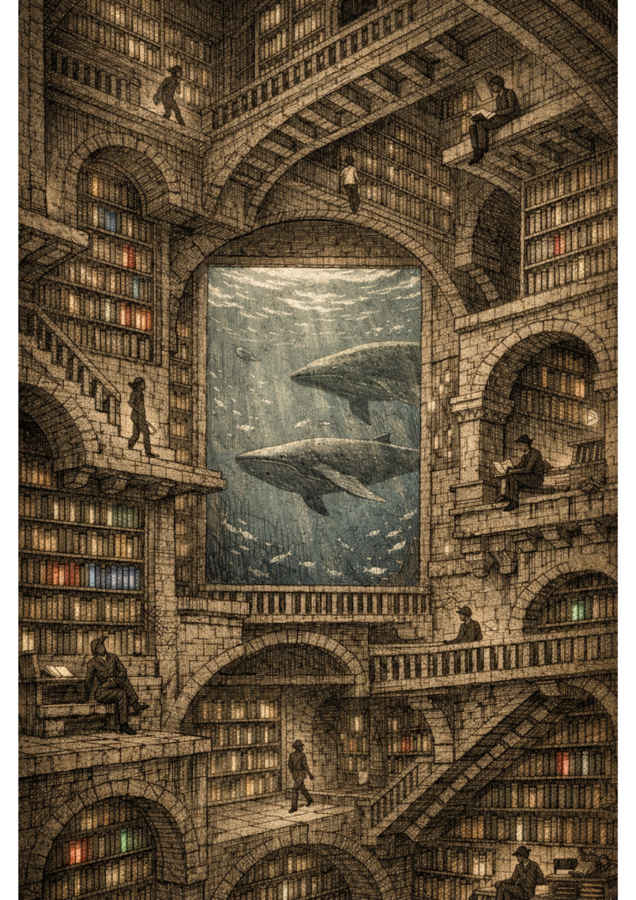

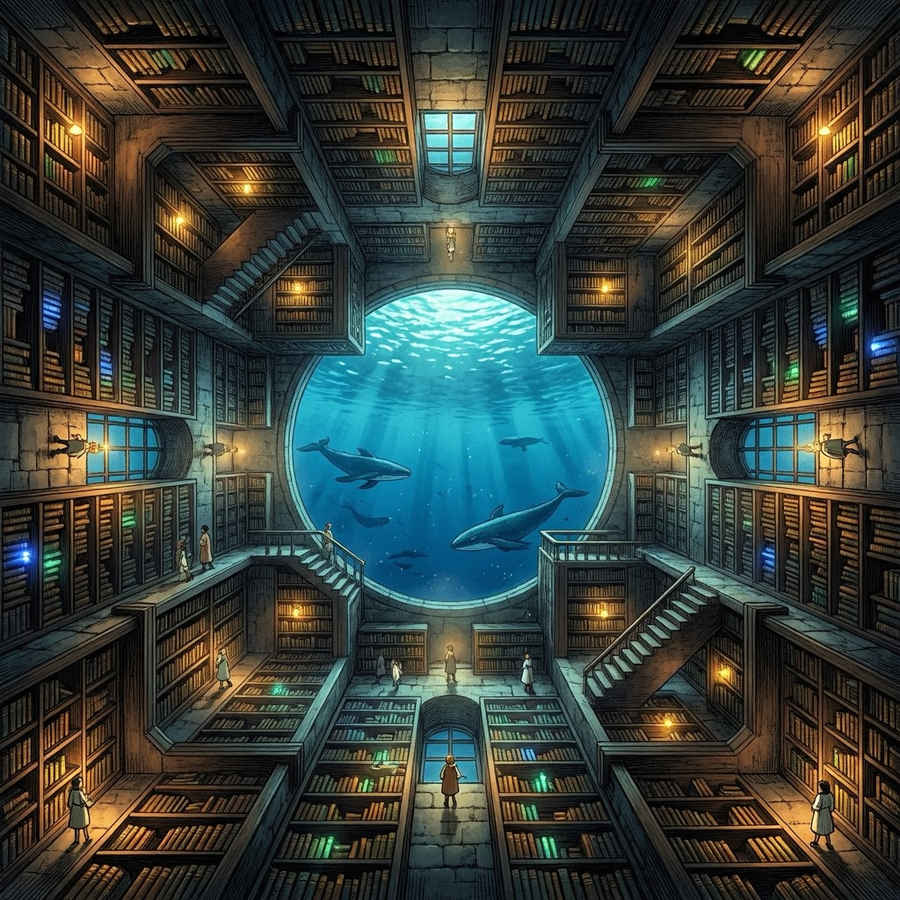

Prompt: A vast impossible library where gravity rotates 90 degrees every 50 meters, so bookshelves, staircases, and reading nooks cover all six faces of every room simultaneously. Tiny human figures walk on walls and ceilings, completely at ease. Books are written in their own light, glowing softly from their spines in colors that correspond to their genre. One enormous window at the center reveals not sky, but the underside of an ocean, with whales drifting past. Painted in the style of a Piranesi etching crossed with a moody, warm and wondrous background.

Yes, I was watching Interstellar when the idea for this image came to me. Gemini won this one on pure atmosphere: warm amber light against a cold blue whale window, glowing rainbow book spines, figures everywhere doing library things, and rich architectural detail that rewards close looking.

ChatGPT nailed the Piranesi etching half of the brief: cross-hatched stonework, figures on impossible staircases (some of them upside-down), and the whale window as a monumental architectural frame. It missed the glowing books entirely but was otherwise stunning.

MAI-Image-2 gave me a charming anime library with a nice aquarium window. Books on the ceiling, people emphatically not on the ceiling. It understood the elements but didn’t commit to the physics, so it was a little too safe for me.

Verdict

Gemini’s three wins came in rounds 3, 4, and 5: infographic design, macro science, and the impossible library. That’s two technically demanding prompts and one that was almost entirely subjective. ChatGPT’s two wins came in rounds 1 and 2: typography and optical physics. One is heavily stylistic and the other heavily technical, so there’s no clean pattern. These models don’t sort neatly into “the accurate one” and “the beautiful one.” Reality is messier than that.
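For anyone who wants the scoreboard laid out, here is a minimal sketch that tallies the round winners reported above. The names `ROUND_WINNERS`, `MODELS`, and `tally_wins` are hypothetical, chosen only for illustration, and the tally counts first places only, since those are the only placements stated outright for every round.

```python
from collections import Counter

# Round winners as reported in the sections above (first place only).
ROUND_WINNERS = {
    "Typography": "ChatGPT",
    "Optical physics": "ChatGPT",
    "Infographic design": "Gemini",
    "Extreme macro": "Gemini",
    "The impossible library": "Gemini",
}

# Listed explicitly so a model with zero wins still appears in the tally.
MODELS = ["ChatGPT", "Gemini", "MAI-Image-2"]


def tally_wins(winners, models):
    """Return a dict mapping each model to its number of round wins."""
    counts = Counter(winners.values())
    return {model: counts.get(model, 0) for model in models}


if __name__ == "__main__":
    for model, wins in tally_wins(ROUND_WINNERS, MODELS).items():
        print(f"{model}: {wins}")
```

A win count is a blunt instrument: it says nothing about how each model failed, which is where the more useful differences show up.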

Gemini has a habit of rewriting the mood of a prompt rather than following it. Give it a brief with a specific environment and it might instead hand you the more beautiful version of the idea it extracted. When that instinct works, as it did in the infographic round and the impossible library, the results are extraordinary. When it doesn’t, you get the most gorgeous wrong answer in the room.

ChatGPT follows the mood faithfully but rewrites the facts when beauty is on the line. The butterfly scale prompt is the clearest example: it produced something breathtaking and biologically invented, trading scientific accuracy for visual drama.

MAI-Image-2 never won a round but got silver medals in the two most technically demanding prompts. Its consistent failure is that it plays it too safe, producing competent, pleasant outputs that rarely take risks. The infographic round is what holds it back the most. It generated the visual structure of an infographic, complete with annotation lines and text blocks, filled entirely with gibberish.

For anyone deciding which model to reach for, it depends on what kind of wrong you can live with. None of them is flawless and they are all capable of producing something noteworthy.


A journalist with a soft spot for tech, games, and things that go beep. While waiting for a delayed metro or rebooting his brain, you’ll find him solving Rubik’s Cubes, bingeing F1, or hunting for the next great snack.