Why is Meta building its own AI chips, and at what cost?

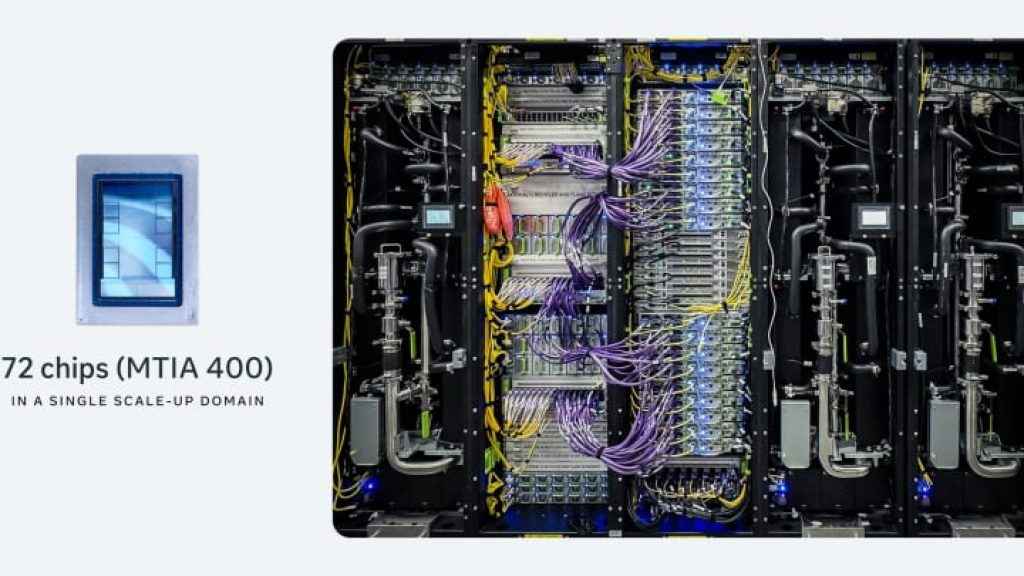

The timing is awkward, even by Silicon Valley standards. Last week, reports emerged that Meta had quietly killed its most ambitious AI training chip, codenamed Olympus. According to The Information, Meta scrapped the chip after struggling with its design, pivoting instead to a less complicated approach. Meta declined to comment. Then, abruptly, the company announced four new generations of its homegrown MTIA chips – 300, 400, 450, and 500 – and said nothing about the cancellation.


The four chips it announced are all inference-focused, designed to run AI models cheaply at scale, not to train them. That’s a meaningful distinction. Training is where Nvidia’s stranglehold on the industry is strongest, and it’s the arena Meta just quietly retreated from.

The official rationale for building custom silicon is straightforward enough. Meta’s stated goal is to diversify its hardware sources, reduce reliance on outside chipmakers, and bring down costs amid a fast-moving and expensive AI race. Its MTIA inference chip reportedly reduced total cost of ownership by 40 to 44 percent across recommendation systems for Facebook and Instagram, real savings at a company serving billions of users daily. When you’re running AI at that scale, shaving even a small amount off the cost of each inference request adds up to enormous sums.


But the “at what cost” question has a literal answer that complicates the independence narrative. In January 2026, Meta announced a capital expenditure budget of between $115 billion and $135 billion for the year, nearly double the previous year’s $72.2 billion, with the majority allocated to chips. And most of that is going straight to the companies Meta says it wants to depend on less. Within ten days in February, Meta signed a multi-year strategic partnership with Nvidia to deploy millions of Blackwell and next-generation GPUs, followed by a chip agreement with AMD worth between $60 billion and $100 billion.

There’s also the question of what “homegrown” actually means here. Meta’s MTIA chips are developed in close partnership with Broadcom – the same company that co-designs Google’s TPUs. The press release calls them Meta’s chips. The reality is considerably more collaborative.

Meta’s chip program has a history of setbacks. It scrapped an earlier inference chip after it underperformed in small-scale testing, and pivoted in 2022 to buying billions of dollars’ worth of Nvidia GPUs. Olympus is just the latest addition to that pattern. Meta’s own Chief Product Officer once described the company’s chip journey as “a walk, crawl, run situation.” Right now, it still looks like a crawl – an expensive, ambitious, necessary one, but a crawl nonetheless.

