Claude Code’s Code Review explained: A multi-agent PR review system

I’ve been following Claude Code closely, and it’s already one of the most capable AI coding tools available. It doesn’t just autocomplete; it reasons through problems and works autonomously across complex codebases. That leaves review as a big chunk of the work still on your plate. That’s where the new Code Review feature comes in, and it could be the addition that makes Claude Code genuinely end-to-end for software development.


The problem it’s solving

Code review has become a bottleneck. As teams ship more code faster, pull requests pile up and reviewers are stretched thin. The result is that many PRs get a skim rather than a proper read and bugs slip through. Anthropic saw this internally: before Code Review, only 16% of their own PRs received substantive review comments.

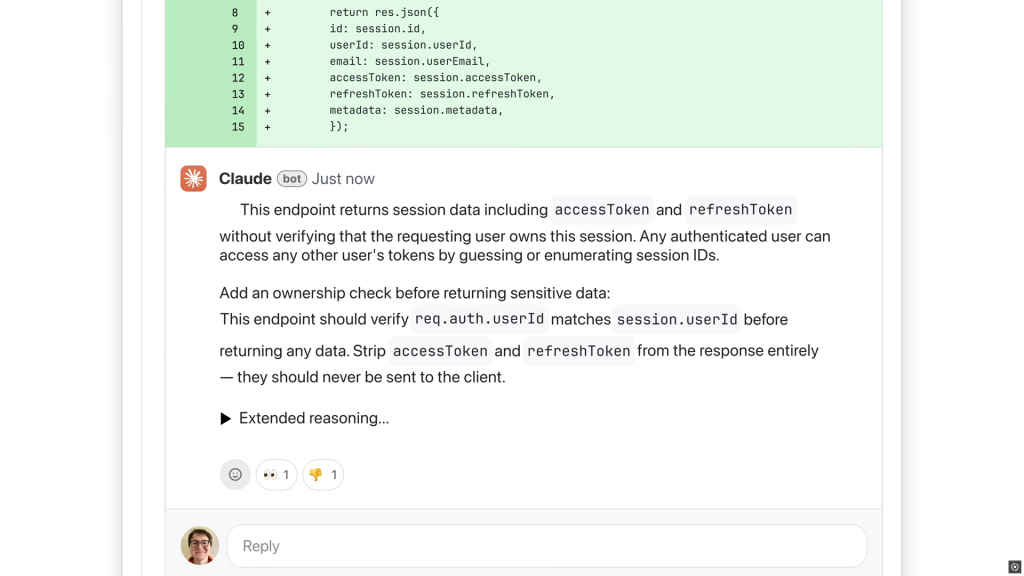

When a pull request is opened, Claude Code doesn’t just run a single pass over the diff. It dispatches a team of agents that work in parallel. Each agent looks for bugs independently, then findings are verified to filter out false positives, ranked by severity, and consolidated into a single overview comment on the PR, alongside inline comments for specific issues.


This multi-agent approach is what separates it from lighter-weight automated review tools. Rather than one model making a single sweep, multiple agents cross-check each other’s findings before anything surfaces to the developer.
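To make the flow concrete, here is a minimal Python sketch of the pattern described above: several agents scan the same diff in parallel, their findings are verified, and the survivors are ranked by severity and rolled into one overview. This is not Anthropic's implementation. The `Finding` type, the toy agents, the `verifier` rule, and the severity scale are all hypothetical stand-ins chosen for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    # Hypothetical shape of a single review finding.
    file: str
    line: int
    message: str
    severity: int  # assumed scale: higher means more serious


def run_agents(agents, diff):
    """Run every agent on the same diff in parallel and pool the findings."""
    with ThreadPoolExecutor() as pool:
        batches = list(pool.map(lambda agent: agent(diff), agents))
    return [finding for batch in batches for finding in batch]


def review(diff, agents, verifier):
    """Collect, verify, rank, and summarise findings for one diff."""
    candidates = set(run_agents(agents, diff))  # drop exact duplicates
    verified = [f for f in candidates if verifier(f, diff)]
    # Most severe first; file and line break ties for a stable order.
    ranked = sorted(verified, key=lambda f: (-f.severity, f.file, f.line))
    overview = f"{len(ranked)} verified finding(s)"
    return overview, ranked


# Toy agents (hypothetical): each looks for one kind of problem.
def null_check_agent(diff):
    if "user.name" in diff:
        return [Finding("app.py", 10, "possible None dereference", 3)]
    return []


def todo_agent(diff):
    if "TODO" in diff:
        return [Finding("app.py", 12, "leftover TODO", 1)]
    return []


def noisy_agent(diff):
    # Always reports something, to show the verification step at work.
    return [Finding("app.py", 99, "speculative issue", 2)]


def verifier(finding, diff):
    # Toy rule: assume only lines 1-50 were changed in this diff.
    return 1 <= finding.line <= 50


if __name__ == "__main__":
    overview, ranked = review(
        "print(user.name)  # TODO",
        [null_check_agent, todo_agent, noisy_agent],
        verifier,
    )
    print(overview)
    for f in ranked:
        print(f"{f.file}:{f.line} [severity {f.severity}] {f.message}")
```

In this toy run the noisy agent's finding is dropped at the verification step, and the two remaining findings come out with the more severe one first. In the real product the agents and the verification step are model-driven rather than simple string checks.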

Reviews aren’t one-size-fits-all. Large or complex PRs get more agents assigned and a deeper analysis pass. Smaller, simpler changes get a lighter review. The average review takes around 20 minutes.

What the numbers show

Since Anthropic rolled this out internally, the proportion of PRs receiving substantive review comments jumped from 16% to 54%. On large PRs, those over 1,000 lines changed, 84% receive findings, averaging 7.5 issues flagged. Fewer than 1% of those findings are marked incorrect by engineers, which speaks to the low false-positive rate.

Code Review is designed for depth, and that comes at a cost. Reviews are billed by token usage and average $15–25 per PR, scaling with size and complexity. Admins can set monthly organisation-wide caps, enable the feature per repository, and track usage through an analytics dashboard.
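For a rough sense of what those averages mean for a budget, here is a back-of-the-envelope Python sketch. It assumes every PR lands in the quoted $15–25 average range, which real token-based billing will not guarantee, and the function names and the three status labels are hypothetical, not part of any Anthropic tool.

```python
def monthly_spend_range(prs_per_month, low_per_pr=15.0, high_per_pr=25.0):
    """Estimate a monthly spend range from the article's per-PR averages."""
    return prs_per_month * low_per_pr, prs_per_month * high_per_pr


def cap_status(prs_per_month, monthly_cap):
    """Compare the estimated range with a monthly cap (hypothetical labels)."""
    low, high = monthly_spend_range(prs_per_month)
    if high <= monthly_cap:
        return "within cap"
    if low > monthly_cap:
        return "over cap"
    return "may exceed cap"


if __name__ == "__main__":
    low, high = monthly_spend_range(200)
    print(f"200 PRs/month: ${low:,.0f} to ${high:,.0f}")
    print(cap_status(200, monthly_cap=4000))
```

At 200 PRs a month the estimate spans $3,000 to $5,000, so a $4,000 cap could be reached before the month ends, depending on how large the PRs are.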

Code Review is currently available in beta, as a research preview, for Claude Code’s Team and Enterprise plans. Once an admin enables it and installs the GitHub App, reviews run automatically on every new PR, with no configuration needed from developers.


A journalist with a soft spot for tech, games, and things that go beep. While waiting for a delayed metro or rebooting his brain, you’ll find him solving Rubik’s Cubes, bingeing F1, or hunting for the next great snack.