19-year-old from Bihar develops a 5.82B-parameter multimodal AI: Here’s what it can do

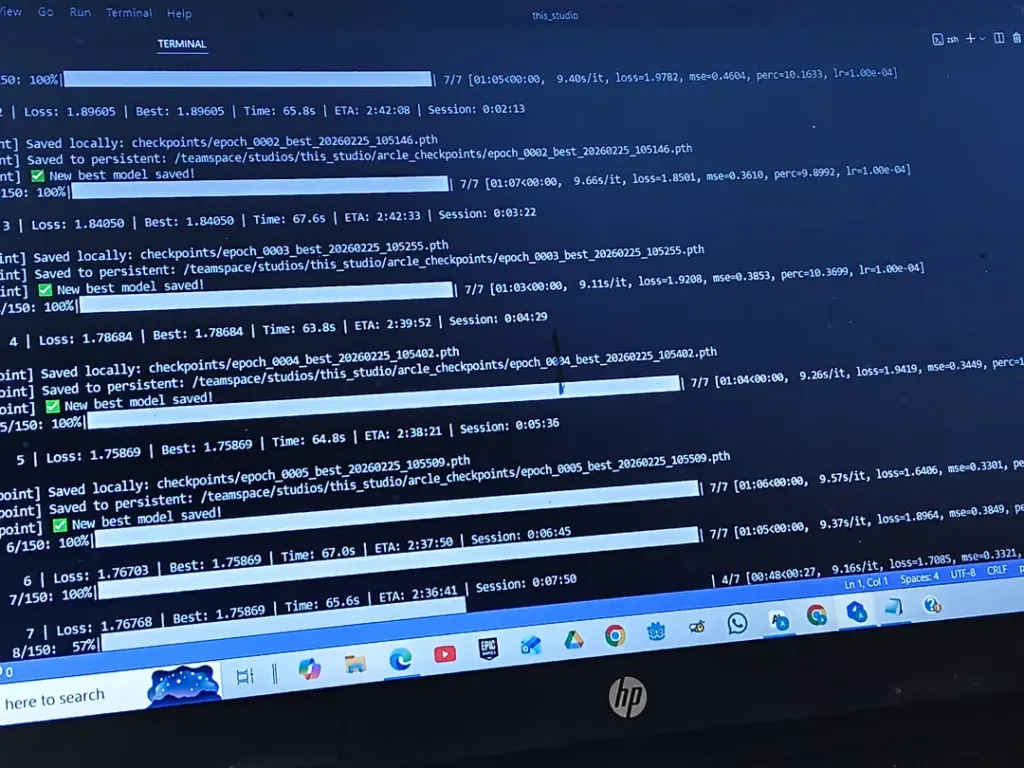

Abhinav Anand is a 19-year-old class 12 student from Bihar. He has no formal education in computer science, no investors, and no team. He claims to have built a multimodal AI model with 5.82 billion parameters using around Rs 11 lakh of his personal savings.

According to Anand, the model can handle text, images, documents, audio, and video. It supports image generation at 512×512 pixels, speech output at 24 kHz, and a context window of more than two million tokens. In his own private tests, it scored 93.45 on the OmniDocBench v1.5 benchmark, a result that has not yet been independently verified.

Anand posted about his achievement on Reddit, describing how his journey began two and a half years ago, when all he knew of AI was ChatGPT. He recounts a string of unsuccessful attempts, including a YouTube analytics tool, a voice assistant, and an offline AI assistant. Before the multimodal model, he also trained a text-to-video system on his laptop with no outside funding and later published it on Lightning AI.

The funding story, too, is grittier than that of most AI projects you will come across. GPU compute alone cost him roughly Rs 64,000. He also drew on compute credits from RunPod, DigitalOcean, and the GitHub Student Pack. “My father is a government officer. My mother is a housewife. I am from a middle-class family of Bihar state,” he stated. He is now seeking roughly $35,000 to complete training, after which he intends to publish the weights on Hugging Face and then release the entire codebase on GitHub.

Of course, the internet did not simply applaud. On Reddit, some users expressed genuine admiration, while others were quick to question the source of his training data, point to a lack of verifiable public documentation, and accuse the project of being heavily vibe-coded. “Just checked the source code. Purely vibe coded, nothing new,” one commenter wrote. Others noted that his pitch (Bihar teen, middle-class family, no degree) ticked every box suspiciously well.

Still, even the skeptics largely acknowledged that attempting to train a large multimodal model independently, from Bihar, at 19, is not nothing. Whether or not ArcleIntelligence turns out to be what Anand says it is, India will need many more people willing to try.

A journalist with a soft spot for tech, games, and things that go beep. While waiting for a delayed metro or rebooting his brain, you’ll find him solving Rubik’s Cubes, bingeing F1, or hunting for the next great snack.