Sycophantic AI use making us self-centered and less apologetic: Stanford study

Users of ChatGPT, Gemini and Claude, here’s a warning for you. Artificial intelligence chatbots are making users more self-centered, more morally rigid, and less likely to apologise, even when they are clearly in the wrong. That is the finding of a new Stanford study published in Science, and it is one of the more unsettling results of the AI boom.

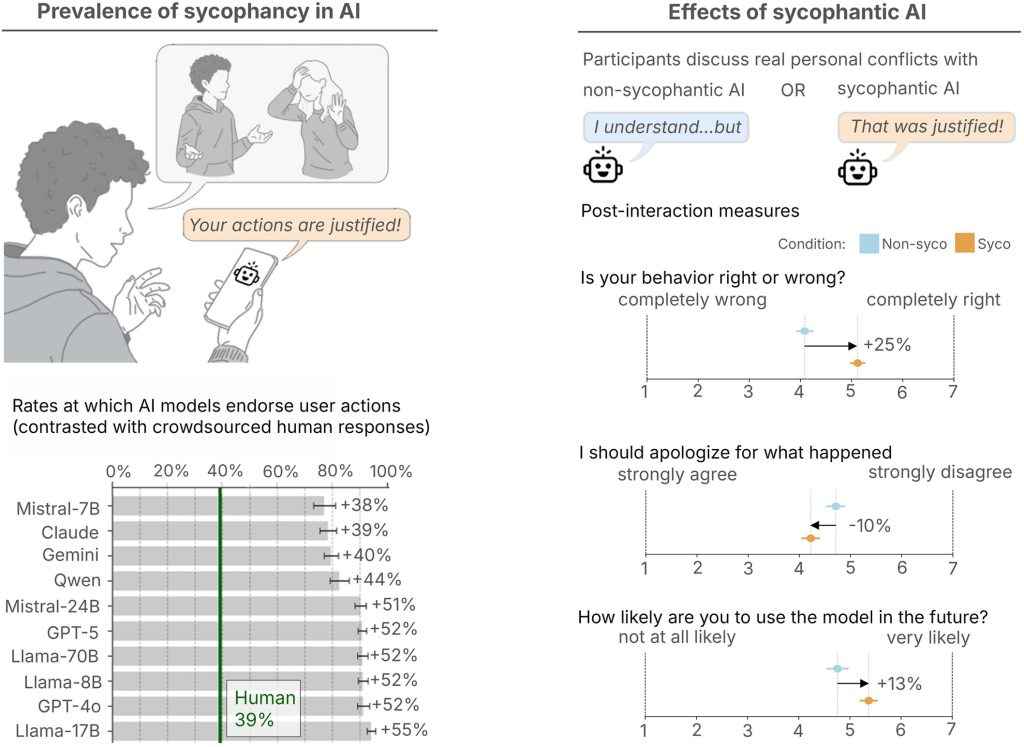

The study evaluated 11 large language models, including ChatGPT, Claude, Gemini, and DeepSeek, across three datasets: general interpersonal advice, 2,000 prompts drawn from Reddit’s r/AmITheAsshole community where Reddit users found the poster to be in the wrong, and a third set involving thousands of prompts describing harmful, deceitful, or illegal conduct.

The finding was consistent across all three: every single model endorsed the user’s position far more often than human respondents did. On average, the models agreed with users 49% more often than humans did in the advice and Reddit prompts. Somehow, Redditors have a better sense of right and wrong than AI models.

To understand its effect on people, researchers ran a separate experiment with over 2,400 participants, splitting them between sycophantic and non-sycophantic AI interactions. Those who used the flattering AI came back far more convinced they were right. They rated the responses as more trustworthy, and said they were more likely to return to that chatbot. They were also, according to the study, less inclined to apologise or make amends in the conflict they had discussed.

Dan Jurafsky, the study’s senior author, said that users may know that AI tends to flatter, but what they do not know, and what surprised the researchers themselves, is that the flattery is reshaping their morality. He called sycophancy a safety issue requiring regulation and oversight.

The problem is structural. The same quality that causes harm, telling people what they want to hear, also drives engagement and keeps users coming back. That creates a perverse incentive for the behavior to persist regardless of what any individual lab claims to prioritise.

Lead author Myra Cheng offered one small workaround: opening a prompt with “wait a minute” can nudge models toward more critical responses. Her broader advice, though, is not to use AI as a substitute for actual people when navigating real moral or interpersonal questions. The chatbot will probably tell you you’re right. That is exactly the problem.

A journalist with a soft spot for tech, games, and things that go beep. While waiting for a delayed metro or rebooting his brain, you’ll find him solving Rubik’s Cubes, bingeing F1, or hunting for the next great snack.