OpenAI lets you add an emergency contact in ChatGPT: Here’s what that actually means

Trusted Contact is OpenAI’s latest ChatGPT feature, and the name is meant to speak for itself: safe, calm, and reliable. It sounds like the Medical ID on your iPhone, but it is a bit more complicated than that, so let’s break it down.


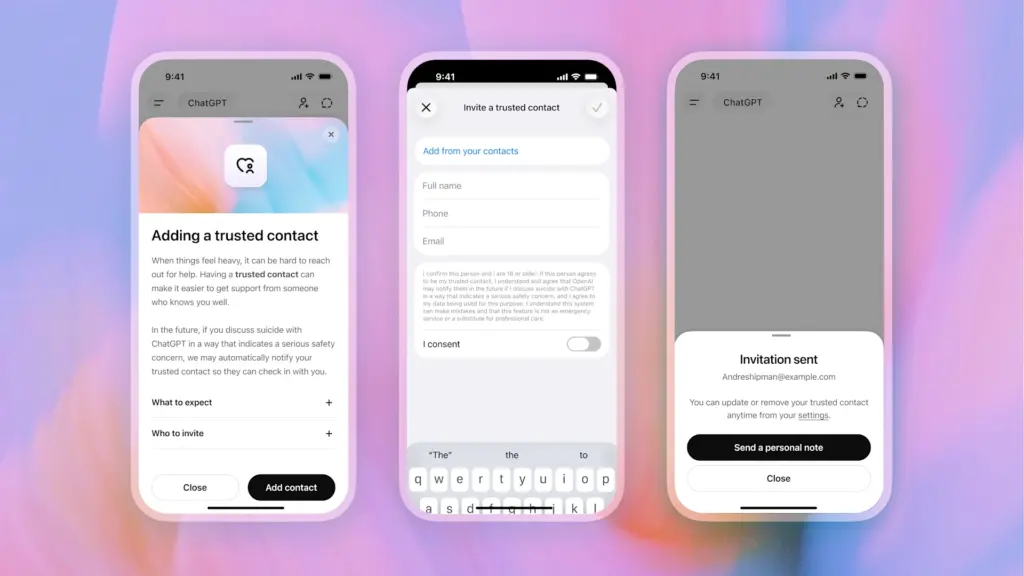

The basic idea is that any adult ChatGPT user can nominate one person (a close friend, relative, or caregiver) as their Trusted Contact. If the automated system detects signs of suicide risk in a conversation, the feature comes into play. ChatGPT encourages you to reach out to your Trusted Contact while a small team of specially trained reviewers examines the case. Once the reviewers decide a notification is warranted, your contact receives an email, text message, or push notification asking them to check in on you. No chat history, nothing specific: just a hint that it might be time to give you a call.
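To make the sequence of gates concrete, here is a minimal sketch of the flow as described above. It is purely illustrative and is not OpenAI’s implementation: every name in it (`TrustedContact`, `Notification`, `handle_flag`, the message wording) is a hypothetical stand-in, and the ordering of checks is an assumption drawn from the description.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Optional


class Channel(Enum):
    EMAIL = auto()
    SMS = auto()
    PUSH = auto()


@dataclass(frozen=True)
class TrustedContact:
    # Hypothetical record; field names are assumptions for illustration.
    name: str
    channel: Channel
    accepted_invite: bool  # the contact must accept before anything is sent


@dataclass(frozen=True)
class Notification:
    recipient: str
    channel: Channel
    message: str  # generic check-in prompt only, never chat content


def handle_flag(
    classifier_flagged: bool,
    reviewer_approved: bool,
    contact: Optional[TrustedContact],
) -> Optional[Notification]:
    """Return a notification only if every gate in the described flow passes."""
    if not classifier_flagged:
        return None  # the automated system saw nothing to escalate
    if contact is None or not contact.accepted_invite:
        return None  # feature not set up, or invitation never accepted
    if not reviewer_approved:
        return None  # human reviewers decided not to notify
    return Notification(
        recipient=contact.name,
        channel=contact.channel,
        message="Someone who chose you as a Trusted Contact may need support. Please check in with them.",
    )
```

The point of the sketch is that the notification carries no field for conversation content at all, and that a missing contact, an unaccepted invitation, or a reviewer’s "no" each stops the process.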

It’s neither an emergency call nor a safety net; rather, it’s an anxious go-between that will, probably, get someone to send you a few texts.

Credit where it is due: OpenAI’s intentions are good. The feature was developed in consultation with mental health practitioners and suicide prevention researchers. It follows the company’s earlier parental alert tool for teen accounts, launched back in September 2025. It is optional, and the contact must accept the invitation. Either party can end the arrangement at any time. This is responsible development.


However, there is more than good intentions behind the new tool. OpenAI has been facing a barrage of lawsuits from the families of people who died by suicide following interactions with ChatGPT. Some of the suits claim that, rather than steering the user toward resources and information that might have helped, the chatbot encouraged them to harm themselves. In other words, Trusted Contact is more than a software upgrade; it is also a response to that pressure.

It’s important to understand the limitations. The feature is entirely optional and must be actively sought out by the user. Even with it enabled, anyone can simply create another ChatGPT account that has no such protection. And the whole process relies on automated classifiers flagging the right conversations, a problem that has always proven harder than anticipated.

OpenAI claims it will review every safety notification in less than an hour. That is impressively quick for a human-in-the-loop system, but it also serves as a reminder that this feature cannot take the place of actual emergency services.

At its best, however, Trusted Contact is a link to humanity. It is an admission that a chatbot cannot serve as the final line of defense in an emergency. Sometimes what a person needs is a text or a phone call from a loved one, not a hotline number in the sidebar.

That much, at least, is obvious.


A journalist with a soft spot for tech, games, and things that go beep. While waiting for a delayed metro or rebooting his brain, you’ll find him solving Rubik’s Cubes, bingeing F1, or hunting for the next great snack.