Did ChatGPT assist a shooter? OpenAI now officially under criminal investigation in US

Authorities claim the chatbot may have provided information related to weapons, timing, and planning

Florida Attorney General James Uthmeier says a human who gave similar assistance could face criminal charges under state law

OpenAI denies wrongdoing, stating responses were based on publicly available data and did not promote harm

OpenAI is now facing a criminal investigation in the United States over allegations that its chatbot, ChatGPT, played a role in a deadly shooting at Florida State University last year. This is the first time an AI company has faced a criminal investigation over potential legal responsibility in connection with a violent crime.

Survey

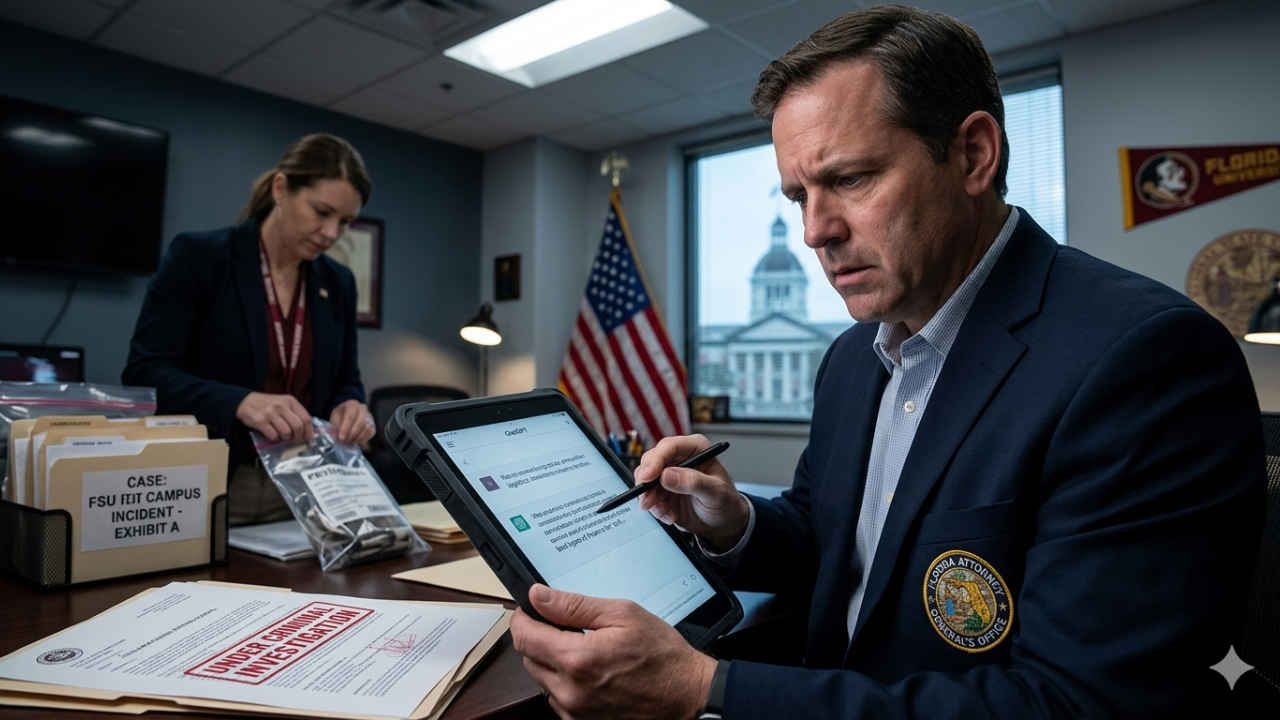

Florida Attorney General James Uthmeier said his office opened the inquiry after reviewing the suspect's alleged use of the chatbot before the incident. According to officials, the accused, 20-year-old student Phoenix Ikner, is believed to have interacted with ChatGPT before carrying out the attack, which left multiple people dead and others injured. He is currently in custody awaiting trial.

Authorities say the chatbot may have provided guidance on weapons, ammunition and timing. Uthmeier said that if a human had offered similar assistance, that person could face criminal charges under Florida law for aiding or abetting a crime. Determining liability for an AI system, however, presents a complex legal challenge.

OpenAI has denied any wrongdoing, stating that its chatbot did not promote or encourage harmful actions. The company added that the responses were based on publicly available information and that it had cooperated with investigators by sharing relevant account data linked to the suspect.

The case comes amid increasing scrutiny of AI platforms and their safeguards. OpenAI has previously faced legal challenges tied to alleged misuse of its technology and has said it is working to improve its safety measures. Meanwhile, regulators and policymakers continue to push for stricter oversight, particularly as AI tools become more widely accessible and capable.

Ashish Singh is the Chief Copy Editor at Digit. He's been wrangling tech jargon since 2020 (Times Internet, Jagran English '22). When not policing commas, he's likely fueling his gadget habit with coffee, strategising his next virtual race, or plotting a road trip to test the latest in-car tech. He speaks fluent Geek.