Microsoft Copilot terms are legal ‘LOL’ for every business using it

Microsoft's Copilot terms quietly label it "entertainment purposes only"

It contradicts Microsoft's aggressive marketing of Copilot for work

Enterprises using Copilot at scale left scratching their heads

Yeah, I know, none of us really spend enough time reading the terms of use of the tech products we sign up for, whether it’s hardware or software. Turns out, we should have read Copilot’s terms of use, which Microsoft last updated in October 2025. Why? Because they contain language that’s frankly hilarious, if not confusing.

Before I address the legal terms of Copilot, remember what the AI chatbot symbolises for Microsoft. It symbolises Microsoft’s first major foray into the GenAI industry, with the Redmond giant making a huge marketing push for Copilot across different products, from dedicated keyboard keys on the Copilot+ PC branded laptops of the past couple of years to business software tools.

While we don’t know its exact secret sauce, we know it’s neither wholly developed by Microsoft nor just a rebadged version of OpenAI’s ChatGPT. Instead, Copilot is a hybrid product built on OpenAI’s GPT-4 and GPT-5 foundational LLMs. And its legal terms were last updated in October 2025.

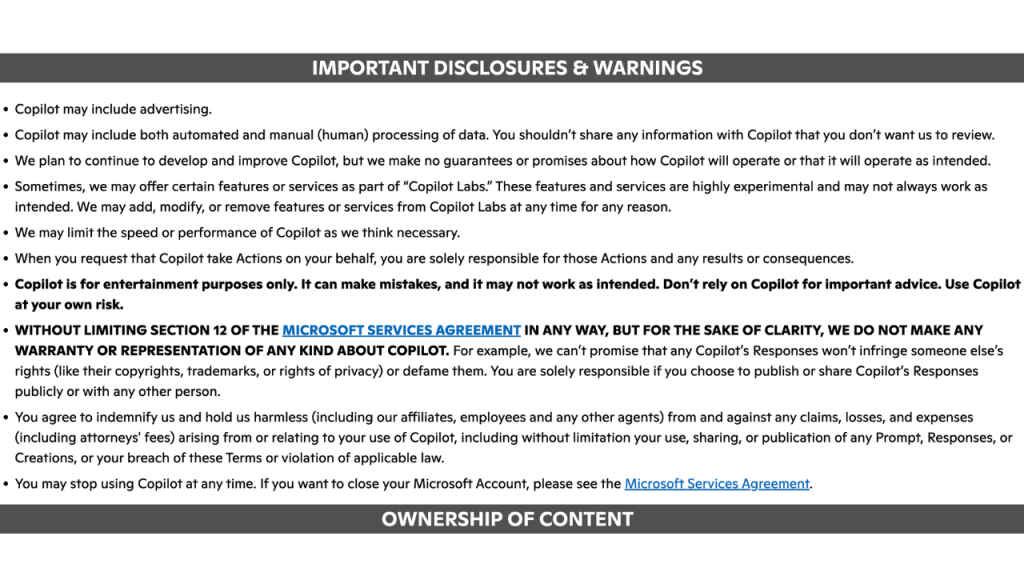

“Copilot is for entertainment purposes only,” reads Microsoft’s legal terms on Copilot use. The terms make it clear that Copilot can make mistakes from time to time and may not work as intended. “Don’t rely on Copilot for important advice. Use Copilot at your own risk.”

These are incredible statements, even if they can be blamed on the extremely risk-averse legal eagles responsible for drafting them. You see, Microsoft has built its entire GenAI messaging around Copilot since 2023. And even though its relationship with OpenAI is at its lowest point, with Microsoft developing its own native multimodal AI models since Mustafa Suleyman came onboard, calling Copilot only good enough for “entertainment” use cases is still shocking.

It’s directly at odds with everything Microsoft CEO Satya Nadella has been saying about Copilot for the past couple of years. He has positioned Copilot as a transformative productivity tool that businesses can deploy to reimagine work and unlock creativity, while Microsoft has invested billions integrating these tools across its products and services.

If Copilot is only for “entertainment”, why is Microsoft force-fitting it everywhere, from the Edge browser and Office productivity apps to, heck, even Windows 11, trying to nudge users into investing time and effort into something being advertised as the greatest thing since sliced bread?

Who can forget Satya Nadella saying AI usage isn’t optional, reframing Copilot adoption as a cultural necessity for Microsoft, not just a fringe experiment? But if it’s only for entertainment use, and can’t be trusted for serious work, how do we square this circle?

Legal terms of use are disclaimers, I get it. But the legal terms of ChatGPT, Gemini and Claude don’t categorize any of them as only for “entertainment use”. That’s where Microsoft Copilot starts feeling disingenuous. If Copilot is only for “entertainment” purposes, that’s Microsoft signalling it’s a colossal waste of time because it can’t be trusted for serious work. Which raises the question: why even use it in the first place, right?

Executive Editor at Digit. Technology journalist since Jan 2008, with stints at Indiatimes.com and PCWorld.in. Enthusiastic dad, reluctant traveler, weekend gamer, LOTR nerd, pseudo bon vivant.