Claude Code’s thinking depth dropped 67%: Here’s what Anthropic actually changed and how to fix it

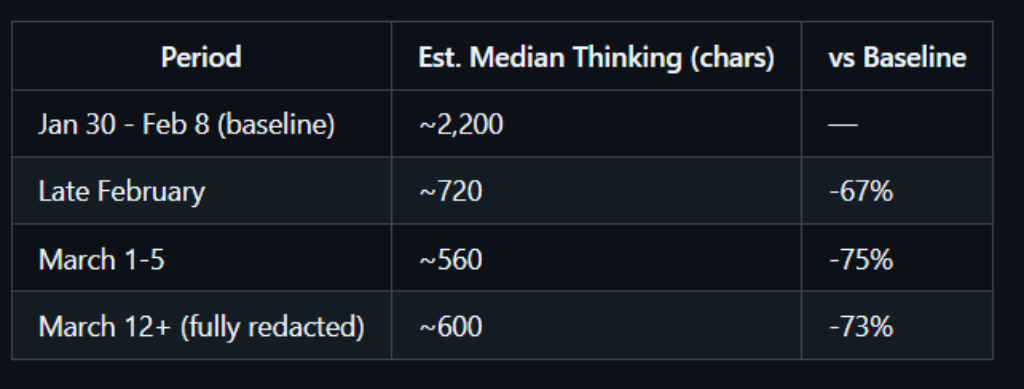

Have the past few weeks of using Claude Code made you want to switch to ChatGPT or Codex? If so, you may be right that something inside Claude Code has changed. In a GitHub post, AMD’s Stella Laurenzo claimed that Claude’s thinking depth had plummeted by 67% since February, and she brought receipts. The AMD AI head wasn’t happy with the dip in performance, going so far as to say that “Claude Code is unusable for complex engineering tasks.”

SOMEONE ACTUALLY MEASURED HOW MUCH DUMBER CLAUDE GOT. THE ANSWER IS 67%.

the data shows Opus 4.6 is thinking 67% less than it used to.

anthropic said nothing until the numbers went public. then suddenly Boris Cherny (creator of Claude Code) shows up on the GitHub issue.

users… pic.twitter.com/P8dEQ09k81

— Om Patel (@om_patel5) April 8, 2026

What was actually happening

The 67% figure came from getting Claude to analyse its own session logs to measure how much it was thinking. The problem was that Anthropic had introduced a header called redact-thinking-2026-02-12 that hides Claude’s reasoning from the UI and from stored transcripts. Claude, reviewing its own logs, saw no thinking blocks and reached the conclusion that it had stopped thinking. It was essentially reading its own diary and finding blank pages, not because nothing happened, but because someone had used invisible ink.
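To make the measurement problem concrete, here is a minimal Python sketch of how counting visible thinking in a stored transcript can report a collapse even when the model's reasoning is unchanged. The transcript structure (`blocks`, `type`, `text`), the helper names, and the sample numbers are all hypothetical, invented for illustration; they are not Claude Code's actual log format or the data behind the 67% figure.

```python
def thinking_chars(turns):
    """Total characters of visible thinking across a list of turn dicts."""
    return sum(
        len(block["text"])
        for turn in turns
        for block in turn["blocks"]
        if block["type"] == "thinking"
    )


def redact_thinking(turns):
    """Return a copy of the transcript with thinking blocks dropped,
    mimicking (as an assumption) what a redacted stored log looks like."""
    return [
        {"blocks": [b for b in turn["blocks"] if b["type"] != "thinking"]}
        for turn in turns
    ]


def percent_drop(before, after):
    """Relative drop from `before` to `after`, in percent."""
    if before == 0:
        raise ValueError("baseline has no visible thinking to compare against")
    return 100.0 * (before - after) / before


# Made-up session: the model "thought" for 1000 characters in total.
session = [
    {"blocks": [{"type": "thinking", "text": "x" * 400},
                {"type": "text", "text": "Here is the patch."}]},
    {"blocks": [{"type": "thinking", "text": "x" * 600},
                {"type": "text", "text": "Tests pass."}]},
]
stored = redact_thinking(session)  # what ends up in the saved transcript

visible_before = thinking_chars(session)  # 1000
visible_after = thinking_chars(stored)    # 0
print(percent_drop(visible_before, visible_after))  # 100.0
```

The same session yields a 100% "drop" purely because the stored copy omits the thinking blocks, which is the kind of artifact a log-based measurement cannot distinguish from a real change.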

Boris from the Claude Code team confirmed in a blog post that the header is UI-only. Thinking still happens under the hood; it was the measurement that was broken, not the model itself.

That being said, two real changes did ship in February and March that affected behavior. First, Opus 4.6 introduced adaptive thinking where the model now decides how long to think rather than being given a fixed budget. Second, the default effort level was dropped to medium (85 out of 100) on March 3rd, framed as a “sweet spot on the intelligence-latency curve.” For users doing deep, complex engineering work, that “sweet spot” felt more like a pothole.

There’s also a real bug, and here’s how to fix it

After users shared actual conversation transcripts, Boris confirmed that adaptive thinking appears to under-allocate reasoning on specific turns. The turns where Claude hallucinated – inventing GitHub SHAs, fake package names, wrong API versions – had zero reasoning tokens emitted. The turns where it got things right had deep reasoning. That looks like a pattern, and hopefully Anthropic’s team is looking into it.
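If you want to check your own sessions for the same pattern, a rough sketch follows. It assumes you have already extracted one record per turn with a reasoning token count and a hand-labelled `hallucinated` flag; both field names and the sample records are hypothetical, not something Claude Code exports in this form.

```python
from collections import Counter


def tabulate(turns):
    """Count turns by (reasoning emitted?, outcome)."""
    counts = Counter()
    for turn in turns:
        reasoning = "zero" if turn["reasoning_tokens"] == 0 else "nonzero"
        outcome = "hallucinated" if turn["hallucinated"] else "correct"
        counts[(reasoning, outcome)] += 1
    return counts


def hallucination_rate(counts, reasoning):
    """Share of hallucinated turns within one reasoning bucket, or None if empty."""
    bad = counts[(reasoning, "hallucinated")]
    total = bad + counts[(reasoning, "correct")]
    return bad / total if total else None


# Made-up, hand-labelled turns purely for illustration.
turns = [
    {"reasoning_tokens": 0, "hallucinated": True},
    {"reasoning_tokens": 0, "hallucinated": True},
    {"reasoning_tokens": 0, "hallucinated": False},
    {"reasoning_tokens": 1800, "hallucinated": False},
    {"reasoning_tokens": 950, "hallucinated": False},
]

counts = tabulate(turns)
print(hallucination_rate(counts, "zero"))
print(hallucination_rate(counts, "nonzero"))
```

A large gap between the two rates on your own labelled turns would be consistent with the under-allocation described above, though a handful of turns is not enough to prove it.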

Until it’s resolved, you can get Claude Code performing closer to its old self by making a few small changes.

Run /effort high or /effort max in your session. Alternatively, set CLAUDE_CODE_EFFORT_LEVEL=max as a shell environment variable so every session defaults to maximum reasoning. If you want to see Claude’s thinking again, add showThinkingSummaries: true to your settings.json. And if you’re hitting the hallucination bug specifically, CLAUDE_CODE_DISABLE_ADAPTIVE_THINKING=1 forces a fixed reasoning budget instead of letting the model decide per turn.

The broader frustration is legitimate. Changing a default in a way that meaningfully degrades output for power users – without a loud warning – is a trust problem as much as a technical one. But the good news is that the team is listening, and the 67% loss in thinking may well be more of a misconfiguration than a lobotomy. Your Claude isn’t broken. It just needs the right settings to remember how to think.
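For anyone who would rather script the settings mentioned above than set them by hand, here is a minimal Python sketch. The environment variable names and the showThinkingSummaries key come from the steps above; the helper function names, the idea of passing the settings.json path in as an argument, and the launch command in the comment are assumptions for illustration only.

```python
import json
import os
from pathlib import Path


def enable_thinking_summaries(settings_path):
    """Set showThinkingSummaries to true in a settings.json, keeping other keys."""
    path = Path(settings_path)
    text = path.read_text() if path.exists() else ""
    settings = json.loads(text) if text.strip() else {}
    settings["showThinkingSummaries"] = True
    path.write_text(json.dumps(settings, indent=2) + "\n")
    return settings


def effort_env(base_env=None, disable_adaptive=False):
    """Build an environment dict that asks for maximum effort.

    With disable_adaptive=True it also requests a fixed reasoning budget.
    """
    env = dict(os.environ if base_env is None else base_env)
    env["CLAUDE_CODE_EFFORT_LEVEL"] = "max"
    if disable_adaptive:
        env["CLAUDE_CODE_DISABLE_ADAPTIVE_THINKING"] = "1"
    return env


# Hypothetical usage (not executed here): point the first helper at wherever
# your settings.json lives, then launch the CLI with the adjusted environment,
# e.g. subprocess.run(["claude"], env=effort_env(disable_adaptive=True)).
```

Merging into the existing file rather than overwriting it keeps any other settings you already have in place.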

A journalist with a soft spot for tech, games, and things that go beep. While waiting for a delayed metro or rebooting his brain, you’ll find him solving Rubik’s Cubes, bingeing F1, or hunting for the next great snack.