Why the smartphone will kill the point and shoot camera

A peek into the future of cellphone cameras, which spells doom for basic point and shoots.

The idea of being able to capture real life in a still frame was once something that spurred thoughts of witchcraft. At least that’s how it was back in the dark ages, until the 1800s, when innovators like Louis Daguerre, Joseph Niépce and Thomas Wedgwood shot the first set of photographs using a “camera obscura.” Cut to some two hundred and thirteen years later, and here we are again, talking about camera tech that could, once again, only have come out of science fiction, but that’s the beauty of life.

Imagination fuels science fiction, and science fiction fuels the fascination of the select few who would love to see the imaginary turned into the real. That has pretty much become the premise of all inventions, of every fascinating gadget we see coming out these days. But let’s go back to photography. We’ve seen some remarkable improvements in imaging technology in the last 10 years, going from digital cameras that required a shoulder pack for power and storage to cameras that fit into our pockets and store thousands of images at a time. We can shoot images of race cars that defy their top speeds, or shoot objects so tiny that our eyes don’t even register them, and yet there is room for more. Scientists and engineers are working round the clock to bring some impressive technology of tomorrow to your camera today, and we’re going to tell you just what it is.

The Lytro Red Hot camera

F**K The Focus

When Lytro was demoed on October 19, 2011, the world held its breath in sheer awe of what it was seeing. The camera created images which could be refocused after being shot, so that you would always have the perfect shot and not worry about missing focus. This camera also had promising implications for journalists, for whom not having to worry about critical focus would have greatly improved their chances of nailing their award-winning shot. But Lytro didn’t catch on as much as everyone had thought it would, simply because the final resolution of the images from the camera was just a little over one megapixel.

Cue the future, as it is being envisioned and developed by Pelican Imaging. These guys are essentially developing tech very similar to the Lytro, but unlike Lytro and other array-based or so-called plenoptic cameras, Pelican’s design uses a small array of traditional imaging elements. Each of these elements is sensitive only to a single colour. Pelican’s unique approach provides it with one huge differentiator: its sensor module is self-contained, with no additional lens required.

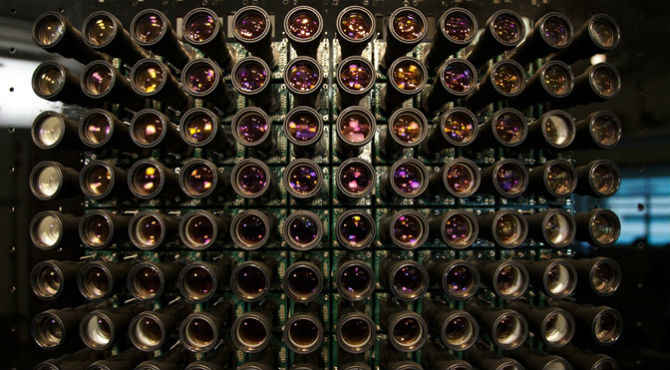

Stanford prototype Plenoptic Light Field camera array (image courtesy Extreme Tech)

What the Pelican-developed sensor will be able to do is capture not just light, but also the direction of that light. It also divides its entire imaging surface into “mini cameras”, each of which captures only one colour. The information from each individual “mini camera” is then compiled through mathematical wizardry to yield 16.7 megapixels’ worth of data, which finally results in an 8 megapixel image. The best part: everything in the image, from the foreground to the farthest object in the background, will be in focus, so there really won’t be a need to refocus the image after it’s shot. Now imagine this tech making its way into your camera phone. Rumour has it that Apple, HTC and even Nokia have been experimenting with the technology and could implement it in their devices in the coming years.
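To get a feel for what that “mathematical wizardry” has to do, here is a minimal Python sketch. It is not Pelican’s actual algorithm: it assumes three hypothetical single-colour mini cameras that each see the same row of the scene, shifted sideways by a known number of pixels. The function names, offsets and pixel values are all invented for illustration.

```python
# Hypothetical sketch: combine single-colour "mini camera" views into RGB pixels.
# Offsets and pixel values are made up; a real array camera must estimate them.

def align(view, offset, width):
    """Undo a known horizontal offset (in pixels) and crop to `width` samples."""
    return view[offset:offset + width]

def merge_views(red, green, blue, offsets, width):
    """Align three single-colour rows and zip them into (r, g, b) pixels."""
    r = align(red, offsets["red"], width)
    g = align(green, offsets["green"], width)
    b = align(blue, offsets["blue"], width)
    return list(zip(r, g, b))

# Each mini camera records the same 4-pixel scene row, shifted according to
# where it sits in the array. Zeros stand in for pixels outside the scene.
red_view = [0, 10, 20, 30, 40, 0]       # scene starts at index 1
green_view = [50, 60, 70, 80, 0, 0]     # scene starts at index 0
blue_view = [0, 0, 90, 100, 110, 120]   # scene starts at index 2

offsets = {"red": 1, "green": 0, "blue": 2}
pixels = merge_views(red_view, green_view, blue_view, offsets, width=4)
print(pixels)
```

In a real array camera the shift between views depends on how far away each object is, which is why these shifts also carry depth information. The fixed offsets here dodge that harder problem entirely.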

Super Sensors to the Rescue

Lenses may be part of the magic, but EVERYTHING rests on the sensor’s ability to capture the intended subject matter. It’s not just about the silicon, but also about how individual photosites are arranged (think Bayer pattern sensors vs. Fuji’s X-Trans sensors), how the wiring matrix is designed (think regular CMOS sensors vs. Back-Side Illuminated CMOS sensors) and how the micro lenses filter the light coming in. Each of these properties has only recently begun to be refined. The micro-lens array is the primary focus at the moment, with Panasonic doing some major groundbreaking work in the field.

At the moment, a conventional imaging sensor employs micro lenses that let only red, blue or green wavelengths through to the respective photosite on the sensor. As a result, the sensor gets only a fraction of the light, meaning there must be a lot of light for a particular colour to register. Panasonic’s new “micro colour splitters” are micro lenses which are able to separate the three primary colours and redirect them to their respective photosites using diffraction. The result is extreme sensitivity to colour information even in ridiculously low light, since none of the incoming light is being wasted. While Panasonic has a working prototype of the sensor ready, they say it is currently at the laboratory stage and still a few years away from finding its way into your camera. However, we wouldn’t be surprised to see this technology become mainstream in the next five years.
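A bit of back-of-the-envelope arithmetic shows why discarding light hurts so much. The Python sketch below uses a deliberately idealized model, not measured data: it assumes incoming light carries equal energy in each primary, that a filter passes exactly one primary and blocks the other two, and that a splitter loses nothing. Real filters and splitters are messier, and the function names are our own.

```python
# Idealized model, not measured data: compare how much light reaches the
# photosites behind a colour filter versus behind a colour splitter.

def filtered_light(red, green, blue, passes):
    """A filter passes one primary and discards the other two."""
    return {"red": red, "green": green, "blue": blue}[passes]

def split_light(red, green, blue):
    """A splitter redirects every primary to a photosite, discarding nothing."""
    return red + green + blue

# Assumed white-ish light: equal energy in each primary (arbitrary units).
incoming = (1.0, 1.0, 1.0)
total = sum(incoming)

filter_fraction = filtered_light(*incoming, passes="green") / total
splitter_fraction = split_light(*incoming) / total

print(f"filter keeps   {filter_fraction:.0%} of the light")
print(f"splitter keeps {splitter_fraction:.0%} of the light")
```

Under these assumptions, a filtered photosite works with roughly a third of the light that hits it, while the splitter puts all of it to use. How much of that gain survives in a real sensor is a separate question this model does not answer.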

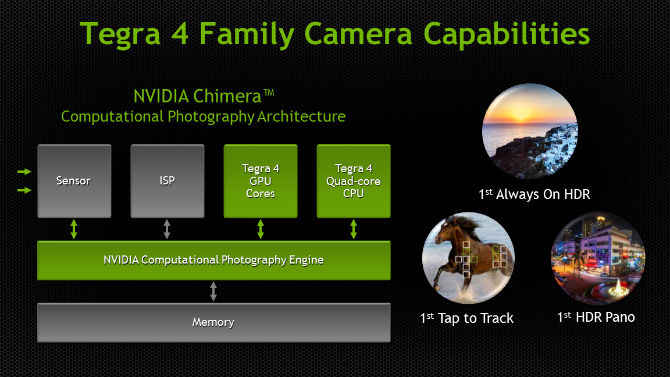

While Panasonic is working hard to make the sensor far more efficient within the existing parameters (the micro colour splitters can be manufactured using existing technology), NVIDIA is developing its own architecture known as Chimera, which is expected to be a mean little piece of imaging tech. Taking advantage of the Tegra 4 processor, which NVIDIA hopes to put into many future smartphones, the Chimera architecture will use the CPU and GPU capabilities of Tegra 4 to generate real-time HDR photos and videos. As of now, HDR video is only possible through the incredibly high-end RED EPIC (which costs close to $20,000), and even there it is only in experimental stages. Imagine being able to shoot HDR video AND stills simultaneously using the Chimera architecture.
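For the curious, the core idea behind HDR is to merge a short exposure (which holds on to the highlights) with a long one (which holds on to the shadows). The Python sketch below shows one simple way to do that. It is not NVIDIA’s actual pipeline: the 8-bit ceiling, the exposure ratio and the pixel values are all assumptions made for illustration.

```python
# Hypothetical two-exposure HDR merge; not NVIDIA's actual Chimera pipeline.

SATURATED = 255  # assumed 8-bit ceiling: a pixel at this value has clipped

def merge_hdr(short_exp, long_exp, exposure_ratio):
    """Estimate scene brightness per pixel, in units of the short exposure.

    Prefer the long exposure, which is cleaner in the shadows, and fall back
    to the short exposure wherever the long one has clipped.
    """
    merged = []
    for short_px, long_px in zip(short_exp, long_exp):
        if long_px < SATURATED:
            merged.append(long_px / exposure_ratio)
        else:
            merged.append(float(short_px))
    return merged

# Made-up row of four pixels; the long exposure gathers 8x as much light.
short_exp = [1, 10, 100, 200]
long_exp = [8, 80, 255, 255]

hdr = merge_hdr(short_exp, long_exp, exposure_ratio=8)
print(hdr)
```

Doing this for every pixel of every frame, fast enough for video, is the sort of workload that would need a phone’s CPU and GPU working together.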

Game of Optics

There’s a lot of sensor talk, but what about optics? Nokia has already revolutionized this arena with its first-ever optically stabilized lens arrangement in the Lumia 920, but that’s just one of the many innovations. What about the ability to change lenses on your camera phone, just like on a DSLR? The idea isn’t so far-fetched if you think about it. Currently, camera phones use a fixed lens design to ensure a slim form factor. If this were taken out of the equation, it could be possible to design the sensor assembly so that it accepts different kinds of lenses. It could be something as simple as a “drop-in” lens block. Companies like Olloclip are already making lenses that can be attached to the front of the camera assembly on various smartphones, and while the results are pretty neat, there is some obvious loss in quality due to a not-so-optimized optical design. Being able to just replace the stock lens with a “telephoto” block or a “fish-eye” would be a far cleaner solution.

The iPhone PRO concept by Choi jinyoung

The Endless Possibilities

As of now, we’re seeing real development on the actual sensor, with light-field architecture being one of the main points of focus, while NVIDIA is trying to beef up the processor to a point where it can do far more in one go than previously imaginable. But what if one day, the light-field guys and the NVIDIA guys sit down at some café south of Florence and decide to let their powers combine? Imagine having a camera phone with a light-field sensor, capable of shooting HDR video and HDR stills simultaneously. The possibility of being able to swap out the stock lens on your camera phone for a special macro lens or a telephoto lens makes the prospects of cellphone photography seem so much more promising. What’s great is that we are seeing some “primitive” form of all these technologies being developed today, and at the rate at which science performs miracles, it won’t be long before your cellphone replaces your regular point and shoot for good.

Do you also think that smartphone cameras will soon overtake point-and-shoots? Tell me on Twitter @digitallphoenix or in the comments section below.