Who is Jasjeet Sekhon: CSO of DeepMind, AI career, causal inference expert

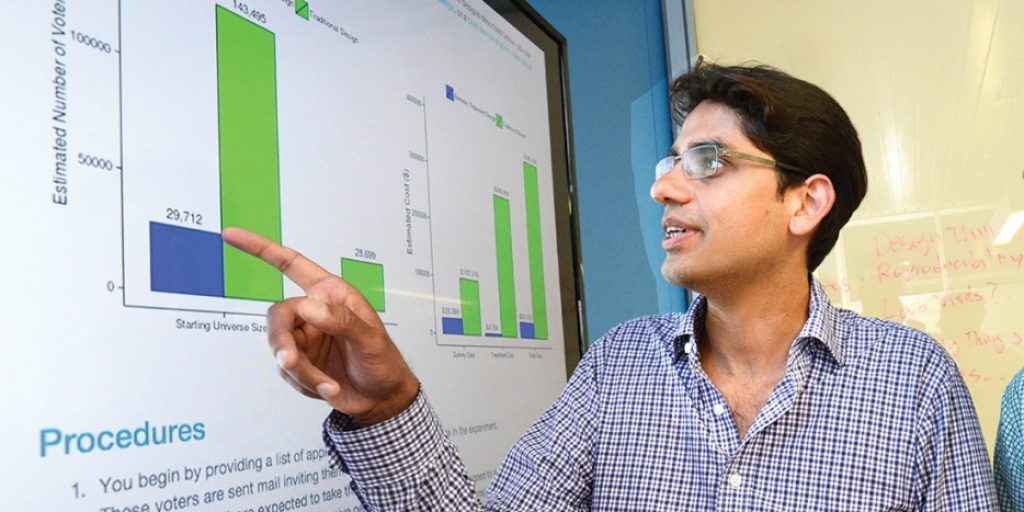

Google DeepMind named Jasjeet “Jas” Sekhon its new Chief Strategy Officer on March 18. Sekhon is not a product executive or a Silicon Valley veteran. He is a statistician, one of the most respected in his field, whose career has moved between university departments, a hedge fund, and now the lab widely regarded as the world’s most ambitious artificial intelligence research organisation.

His mandate at DeepMind is broad: research, commercialisation, and policy. In a post on LinkedIn, Sekhon said he was joining because he believed DeepMind was “the frontier lab best positioned to develop AGI safely to empower humans.” He steps down from Bridgewater Associates, where he was Chief Scientist and Head of AI, though he will remain on its board.

From academia to Wall Street

Sekhon holds the Eugene Meyer Professorship at Yale and is a fellow of the American Statistical Association. He earned his doctorate at Cornell in 1999, taught at Harvard, then spent over a decade at UC Berkeley before moving to Yale. His primary research area is causal inference, the science of determining not just what correlates with what, but what actually causes what. His Genetic Matching algorithm, which helps researchers construct more credible comparisons in observational data, remains one of his most cited works. His methods have been adopted at Amazon, Google, Microsoft, and Netflix.

In 2018 he left academia for Bridgewater Associates, joining as Head of Machine Learning and rising to Chief Scientist. There he applied machine learning to financial signals under conditions of limited data and a low signal-to-noise ratio, a problem structurally similar to the causal inference questions he had worked on in academia.

Why DeepMind?

Sekhon’s background sits at an intersection the AI field has long struggled with: the gap between systems that predict well and systems that reason about cause and effect. Most large models are pattern-matchers. They learn what tends to follow what. Causal reasoning – understanding why something happens, and whether it will hold when circumstances change – remains an open problem. DeepMind, in appointing Sekhon, is signalling where it thinks meaningful work still needs to be done.
