AI content and deepfakes are spreading online: Here’s how streaming platforms are tackling them

Whether you are scrolling through YouTube Shorts, looking at a striking photo on Facebook, reading a sensational message forwarded on WhatsApp, or listening to a song on Spotify, it is increasingly difficult to tell whether the content was made by a human or generated by artificial intelligence (AI). You may have experienced that moment of doubt when something looks convincing but slightly off. The companies running today’s social media, messaging, and streaming platforms are well aware of how widespread the problem has become. Estimates suggest that low-quality, high-volume AI ‘slop’ accounts for a large share of submissions on these platforms. For instance, market research firm Omdia projects that AI-generated uploads could exceed 50% of total daily content volume on streaming services during 2026.

This rise in AI-generated content is forcing platforms to rethink how they manage authenticity, copyright, and user trust. As a user of these apps and services, you may also want to know how to spot AI slop and deepfakes.

So, let’s examine how major platforms, including Google’s YouTube, Meta’s social networks, Spotify, Apple Music, Netflix, and Disney, are handling and labelling AI-generated content. We’ll also look at the technologies behind these systems and explain what they mean for creators and everyday users.

Why AI media is a problem for streaming platforms

Generative AI applications can now produce high-quality music, video, images, and voice recordings without much human input. Tools that once required professional studios can now run on consumer hardware or cloud platforms. As a result, synthetic media has become much easier to produce at scale.

For streaming platforms, this creates several problems:

- First, a viewer may not realise that a video featuring a public figure is generated or manipulated, and a listener may not know that a song was produced entirely by an algorithm. Platforms may face liability if users suffer a loss because of this confusion.

- Second, if AI-generated tracks or content are uploaded at scale, especially through automated bot networks, they can divert revenue away from human artists or creators.

- Third, regulators are beginning to intervene. The Indian government has amended the IT Rules, 2021, requiring social platforms to label deepfakes and remove harmful content within three hours. Rules like these are pushing platforms to develop more sophisticated labels or formal disclosure systems.

Audio platforms face a different challenge. High-quality AI-generated music can be almost indistinguishable from human performances. This makes detection harder and increases the risk of automated streaming fraud.

Meanwhile, subscription video platforms such as Netflix and Disney work in a controlled production environment rather than hosting user-generated content. As a result, AI disclosure happens mostly behind the scenes, and there is no consumer-facing label or badge.

How social media and streaming platforms label AI-generated content

Video and social media platforms face the highest risks because synthetic video or deepfakes can influence elections, spread misinformation, or damage reputations.

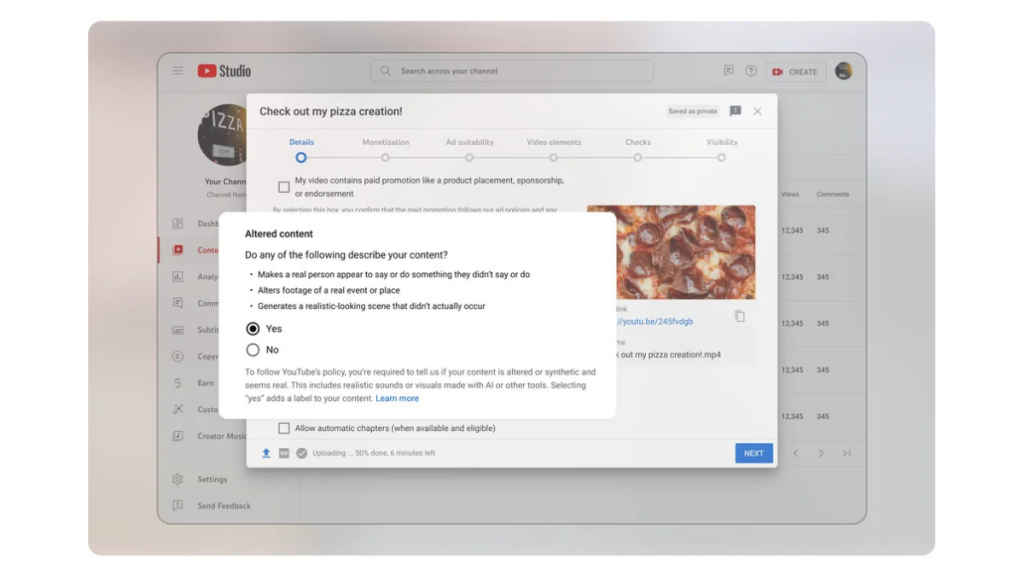

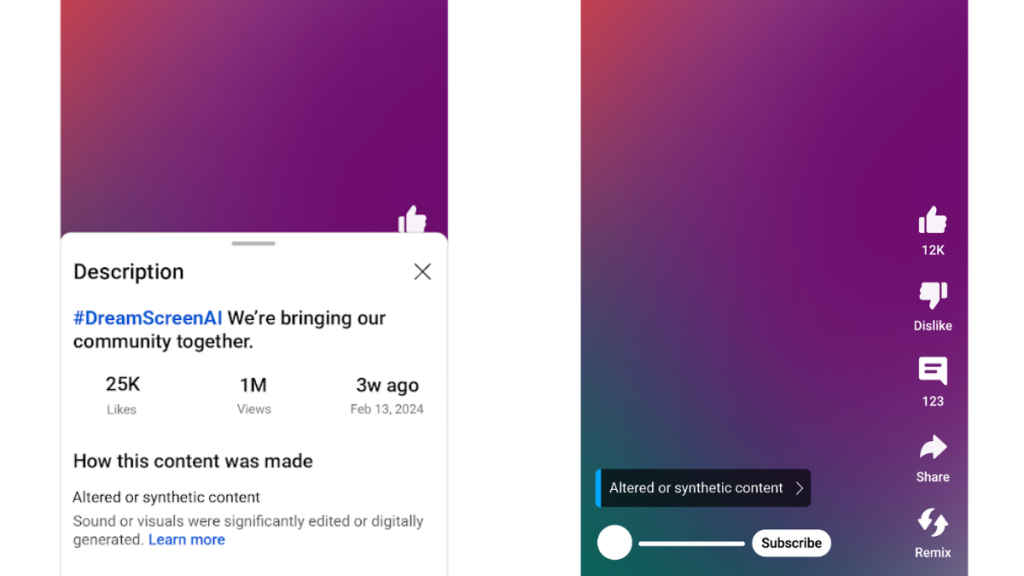

YouTube

YouTube rolled out its disclosure framework for AI-generated content in 2024. From July 2025, it also began demonetising mass-produced, repetitive videos, a policy that largely targets low-effort AI-generated content.

The system requires creators to disclose when realistic synthetic content appears in a video. Examples that require disclosure include:

- AI-generated music in a video

- voice cloning of real individuals

- synthetic footage of real locations

- videos depicting events that never occurred

Minor edits, such as colour corrections, background blur, or creative visual effects, do not require disclosure.

YouTube uses a layered label system. In most cases, AI disclosures appear inside the video description under the section ‘How this content was made’. However, if the topic involves sensitive subjects such as elections, health, or finance, the platform places a visible label directly on the video player.
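The two-tier placement described above can be expressed as a simple rule. This is a minimal sketch, not YouTube’s implementation: the `Upload` fields, the `label_placements` function, and the `SENSITIVE_TOPICS` set are hypothetical, and the topic set only mirrors the three examples named in the paragraph above.

```python
from dataclasses import dataclass, field

# Hypothetical: only the three sensitive topics named in the article.
SENSITIVE_TOPICS = {"elections", "health", "finance"}


@dataclass
class Upload:
    title: str
    realistic_synthetic: bool  # creator disclosed realistic AI content
    topics: set[str] = field(default_factory=set)


def label_placements(upload: Upload) -> list[str]:
    """Return where a disclosure label would appear (hypothetical rule)."""
    if not upload.realistic_synthetic:
        return []  # nothing disclosed, so no label
    placements = ["description: How this content was made"]
    if upload.topics & SENSITIVE_TOPICS:
        # Sensitive subjects also get a label on the video player itself.
        placements.append("player overlay")
    return placements


print(label_placements(Upload("AI city tour", True, {"travel"})))
# ['description: How this content was made']
print(label_placements(Upload("Fake poll clip", True, {"elections"})))
# ['description: How this content was made', 'player overlay']
```

The design keeps the description label as the default and escalates to the player only for high-risk topics, which matches the layered approach the article describes.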

The platform also uses automated detection systems to identify undisclosed synthetic media. Creators who repeatedly fail to disclose AI-generated content risk losing monetisation privileges or facing channel penalties.

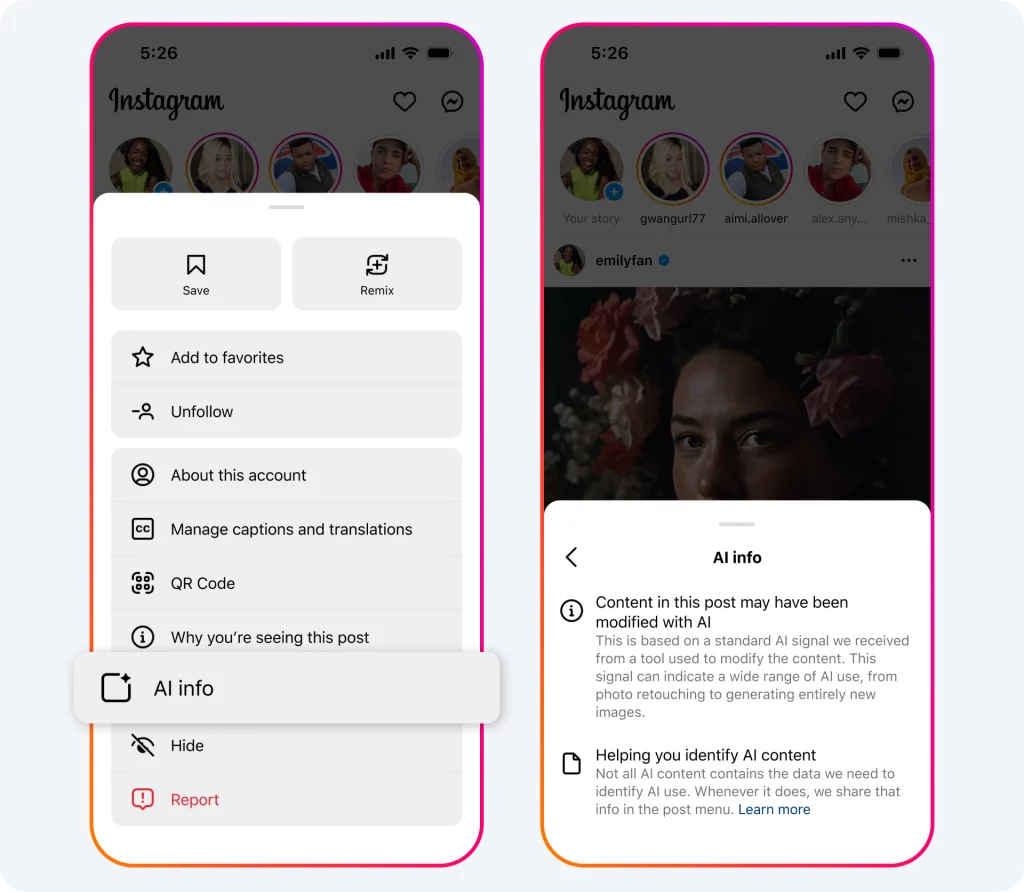

Meta platforms

Meta, which operates Facebook, Instagram, and Threads, changed its AI label from ‘Made with AI’ to ‘AI info’. How visible the label is depends on how much AI was used in the content.

If content is fully generated, the label appears prominently on the post. If AI tools were used only for editing or enhancement, the label may appear in the post’s information menu instead of the main interface.

Apple Music

Apple Music introduced a metadata system called ‘Transparency Tags’. These tags allow labels and distributors to declare whether AI was used during the creation of music.

The tags can indicate material AI use, such as AI generating ‘a material portion of a sound recording’, in any of these elements:

- album artwork

- the sound recording or the track itself

- lyrics or musical composition

- associated music videos

However, the system relies entirely on voluntary disclosure by record labels and distributors. If they do not apply the tags, Apple treats the track as not AI-generated.
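The weakness of an opt-in scheme is easiest to see in code. In this sketch the `Asset` enum, the `Release` class, and `shown_as_ai` are hypothetical stand-ins, not Apple’s actual metadata schema. The point is that an undeclared AI track and a genuinely human-made track look identical to the platform.

```python
from dataclasses import dataclass, field
from enum import Enum


class Asset(Enum):
    # Hypothetical names for the four taggable elements listed above.
    ARTWORK = "album artwork"
    RECORDING = "sound recording"
    COMPOSITION = "lyrics or composition"
    VIDEO = "music video"


@dataclass
class Release:
    title: str
    # Tags declared by the label or distributor; empty if none were applied.
    ai_tags: set[Asset] = field(default_factory=set)


def shown_as_ai(release: Release) -> list[str]:
    """List elements flagged as AI-made. No tag means 'treated as human-made'."""
    return sorted(asset.value for asset in release.ai_tags)


honest = Release("Synth Dreams", {Asset.RECORDING, Asset.ARTWORK})
silent = Release("Also Synth Dreams")  # AI-made, but nothing declared
print(shown_as_ai(honest))  # ['album artwork', 'sound recording']
print(shown_as_ai(silent))  # []
```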

Spotify

Spotify has chosen a different strategy. Rather than banning AI-generated music outright, the platform focuses on metadata transparency and fraud prevention.

Spotify uses the DDEX industry standard to collect detailed metadata about where AI tools were used, such as in vocals, instrumentation, or post-production. This lets the platform distinguish tracks created entirely by algorithms from songs where AI only assisted in mastering or production.
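Per-role disclosures are what let a platform separate fully generated tracks from AI-assisted ones. The sketch below assumes a simplified record with three yes/no fields (`AIDisclosure` and `classify` are hypothetical); real DDEX messages use their own element names, and the rule that AI vocals plus AI instrumentation means “fully generated” is an illustrative assumption, not Spotify’s policy.

```python
from dataclasses import dataclass


@dataclass
class AIDisclosure:
    # Hypothetical simplification of per-role AI credits in a DDEX delivery.
    vocals: bool = False
    instrumentation: bool = False
    post_production: bool = False


def classify(d: AIDisclosure) -> str:
    """Bucket a track by how much of it AI produced (assumed rule)."""
    if d.vocals and d.instrumentation:
        return "fully AI-generated"
    if d.vocals or d.instrumentation or d.post_production:
        return "AI-assisted"
    return "no AI disclosed"


print(classify(AIDisclosure(post_production=True)))  # AI-assisted
print(classify(AIDisclosure(vocals=True, instrumentation=True)))  # fully AI-generated
```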

At the same time, Spotify has introduced spam detection systems designed to catch mass uploads of synthetic tracks, tracks uploaded to another artist’s profile, and bot-driven streaming activity.

Netflix

Netflix requires production partners to disclose the use of generative AI during the filmmaking process.

Certain uses require additional approval, including:

- digital replication of actors without their permission

- synthetic voice generation

- AI-generated visual characters

Disney

Disney has taken a different route. It openly embraces AI to support creative storytelling and content production, improve guest and customer experiences, and boost operational efficiency and employee productivity.

It has also licensed some of its animated intellectual property to OpenAI for use in Sora. The tool lets fans create short videos featuring certain characters but excludes the likenesses of human actors.

The technology behind AI disclosure

Two major technologies underpin most AI disclosure systems across the industry:

Content credentials

The Coalition for Content Provenance and Authenticity (C2PA) developed an open standard, also called C2PA. It embeds metadata directly into digital files, documenting their creation history.

The C2PA metadata records:

- Who created the content

- Which tools were used

- When modifications occurred

Leading tech companies like Google, Microsoft, Meta, Adobe, Samsung, Qualcomm, Intel, Arm, Sony, Canon, Leica, and Cloudflare have integrated C2PA into their products.

Platforms can verify this data because it is cryptographically signed when the content is created. A verifier checks that signature and compares a hash of the current file with the hash recorded in the signed metadata. If the file has been altered, the two no longer match and verification fails.
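The sign-then-verify flow can be sketched with Python’s standard library. This is a simplification under stated assumptions: real C2PA manifests are signed with certificate-based public-key signatures and stored in a defined binary format, whereas this sketch uses a hypothetical shared HMAC key (`DEMO_KEY`) and a plain dictionary. The `make_manifest` and `verify` functions, and the sample creator and tool names, are illustrative only; they show the two checks a verifier performs.

```python
import hashlib
import hmac
import json

# Hypothetical shared key. Real C2PA uses certificate-based public-key
# signatures, so verifiers never need the signer's secret.
DEMO_KEY = b"demo-signing-key"


def _sign(claim: dict) -> str:
    payload = json.dumps(claim, sort_keys=True).encode()
    return hmac.new(DEMO_KEY, payload, hashlib.sha256).hexdigest()


def make_manifest(asset: bytes, creator: str, tool: str, created_at: str) -> dict:
    """Record who made the file, with what, and when, plus a hash of its bytes."""
    claim = {
        "creator": creator,
        "tool": tool,
        "created_at": created_at,
        "asset_sha256": hashlib.sha256(asset).hexdigest(),
    }
    return {"claim": claim, "signature": _sign(claim)}


def verify(asset: bytes, manifest: dict) -> bool:
    # Check 1: the claim has not been edited since it was signed.
    if not hmac.compare_digest(_sign(manifest["claim"]), manifest["signature"]):
        return False
    # Check 2: the file still matches the hash recorded at creation.
    return hashlib.sha256(asset).hexdigest() == manifest["claim"]["asset_sha256"]


photo = b"original pixel data"
manifest = make_manifest(photo, "Jane Doe", "ExampleCam", "2026-01-15T10:00:00Z")
print(verify(photo, manifest))         # True
print(verify(photo + b"!", manifest))  # False: file altered after signing
```

Note that stripping the manifest entirely defeats this check, which is the gap invisible watermarking tries to close.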

Invisible watermarking

Metadata alone is not enough because it can be removed during file conversion. To address this, companies are developing invisible watermarking technologies.

These watermarks are embedded directly into the content itself. For example:

- Images and video may carry pixel-level watermarks, such as Google’s SynthID.

- Audio files may carry inaudible, noise-like watermarks. Spotify reportedly uses this approach.

- Text models can subtly tweak word probabilities to encode a statistical pattern; Google does this for Gemini through SynthID Text. Claims that ChatGPT inserts nearly invisible space characters as a watermark have also circulated, but OpenAI has not confirmed them.

These signals allow platforms to detect AI-generated content even if the metadata has been stripped.
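The probability-tweaking approach for text can be illustrated with a toy version of the “green list” technique from academic watermarking research. It is not SynthID Text or any vendor’s actual method; the vocabulary, the candidate lists, and the `GAMMA` split are assumptions. At each step the generator prefers words that a hash of the previous word marks as “green”. A detector that knows the hash counts green words and computes a z-score: the more green words beyond the expected share, the stronger the evidence of a watermark.

```python
import hashlib
import math

GAMMA = 0.5  # assumed fraction of words that count as "green" in any context


def is_green(prev_word: str, word: str) -> bool:
    # Pseudo-randomly split the vocabulary, re-seeded by the previous word.
    digest = hashlib.sha256(f"{prev_word}|{word}".encode()).digest()
    return digest[0] < 256 * GAMMA


def generate(choices: list[list[str]], watermark: bool = True) -> list[str]:
    # Toy "model": at each step, pick one of several equally plausible words.
    words = ["<start>"]
    for candidates in choices:
        pick = candidates[0]
        if watermark:
            greens = [c for c in candidates if is_green(words[-1], c)]
            if greens:
                pick = greens[0]  # nudge the choice toward the green list
        words.append(pick)
    return words[1:]


def z_score(words: list[str]) -> float:
    # Unwatermarked text should contain about GAMMA * n green words.
    n = len(words)
    if n == 0:
        return 0.0
    seq = ["<start>"] + words
    green = sum(is_green(p, w) for p, w in zip(seq, seq[1:]))
    return (green - GAMMA * n) / math.sqrt(n * GAMMA * (1 - GAMMA))


vocab = [f"word{i}" for i in range(16)]
steps = [vocab] * 60  # 60 positions, 16 candidate words each
print(round(z_score(generate(steps, watermark=True)), 1))
```

Detection needs enough text to be reliable: over only a handful of words, chance alone can push the score high or low.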


What this means for creators and audiences

The introduction of AI disclosure systems has broader implications for the digital economy:

For creators, transparency labels may affect discoverability. Some platforms already reduce the algorithmic promotion of fully synthetic content. Even where there is no explicit penalty, audiences may prefer human-created media.

For users, labels help show whether AI played a role in creating a piece of content, so they can decide how far to trust or rely on it.

How to spot AI-generated content on streaming platforms

Even with these labels and disclosure systems in place, you will likely keep seeing AI-generated media in your feeds, sometimes without any visible label. So, here are some ways you can identify synthetic media:

- Look for platform disclosure labels, such as ‘How this content was made’ in YouTube descriptions or the ‘AI info’ indicator on Meta posts.

- Check the creator’s profile and upload patterns for anything odd, such as a sudden flood of near-identical uploads.

- Watch for unnatural audio or visuals. In images and videos, look at lip movements, finger movements, facial expressions, lighting, and inconsistent background objects. In audio, synthetic songs may have overly uniform vocals with little emotional variation, repetitive melodies and chord structures, or unusually short track lengths.

- Use online AI checkers for text, bearing in mind that they can misclassify human writing.

- Stay generally cautious about possible impersonations and deepfakes.

In any case, the rapid rise of generative AI means this kind of content is likely here to stay. So when you come across something online, it helps to pause and look at it a little more carefully using the checks mentioned earlier. Platforms also need to play their part by adding clearer disclosure labels and taking action against creators who misuse AI to mislead audiences. As detection tools and industry standards improve over time, it should become easier for all of us to navigate the growing mix of real and AI-generated content online.

Keep reading Digit.in for similar stories.


G. S. Vasan

G.S. Vasan is the chief copy editor at Digit, where he leads coverage of TVs and audio. His work spans reviews, news, features, and maintaining key content pages. Before joining Digit, he worked with publications like Smartprix and 91mobiles, bringing over six years of experience in tech journalism. His articles reflect both his expertise and passion for technology.