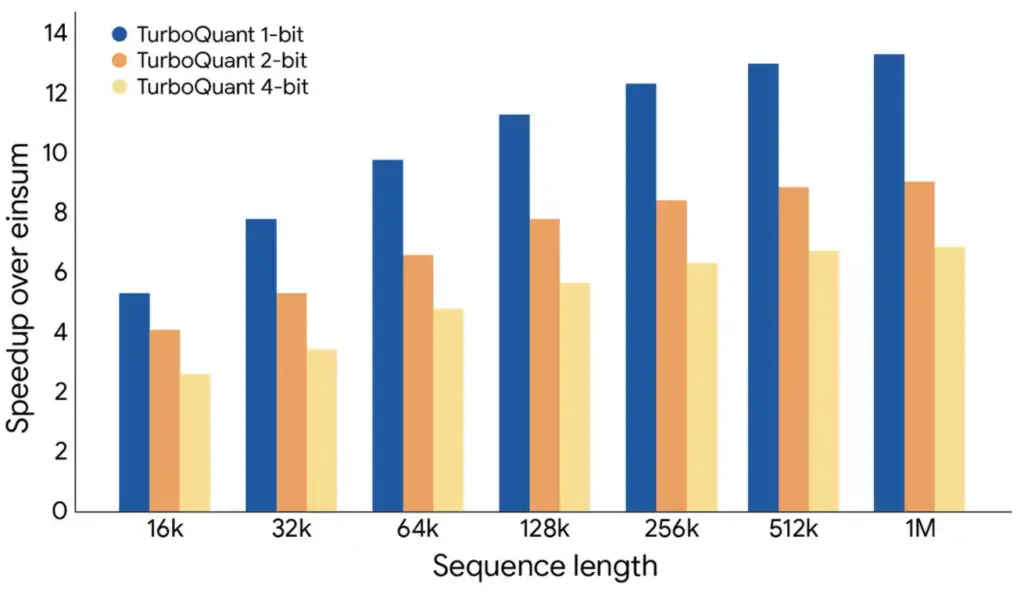

Google’s TurboQuant may drive more memory demand, not less, analysts say

It doesn’t take a genius to figure out that making memory for AI datacenters is way more profitable than making it for your gaming rig, and that most of the big memory makers are not coming back to the consumer market. Still, when Google announced TurboQuant, Micron’s stock fell and RAM prices dipped ever so slightly, giving us a sliver of hope. That hope may be short-lived.

Also read: Why are RAM prices falling, and is it the best time to upgrade?

When Google Research published its TurboQuant blog post in late March, the reaction was swift and visceral. SK Hynix lost 7.3% of its market value within 48 hours. Samsung shares fell sharply. Cloudflare’s CEO called it Google’s “DeepSeek moment.” The narrative wrote itself: an algorithm that compresses AI memory usage sixfold must surely be bad news for the companies selling that memory.

Except analysts aren’t so sure. In fact, many think the market got this completely backwards. Chae Min-suk of Korea Investment & Securities said in a report that the sell-off stemmed from “an interpretation error caused by confusing the roles of memory capacity and memory bandwidth.” The bulls’ argument hinges on the Jevons Paradox, a 19th-century economic observation that when a resource becomes more efficient to use, total consumption tends to rise, because the efficiency makes it viable in far more contexts. When DeepSeek demonstrated dramatically more efficient inference in early 2025, the same fear spread, and demand for high bandwidth memory (HBM) climbed anyway. The market has now seen this exact movie twice and panicked both times.
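
The Jevons argument can be made concrete with a back-of-the-envelope calculation. This is our own illustration, not the analysts’ model, and it rests on two loud assumptions: the cost of serving an AI query is proportional to the memory it needs, and demand for queries has a constant price elasticity ε. If an efficiency gain cuts memory per query m by a factor k, with Q queries and total memory M = mQ:

```latex
% Illustrative assumptions: cost per query \propto memory per query,
% and demand Q \propto p^{-\varepsilon} (constant price elasticity).
m' = \frac{m}{k}, \qquad Q' = Q\,k^{\varepsilon}, \qquad
M' = m'\,Q' = \frac{m}{k}\,Q\,k^{\varepsilon} = M\,k^{\varepsilon - 1}
```

Under these assumptions, total memory demand rises whenever ε > 1, stays flat at ε = 1, and falls only if ε < 1. With the sixfold figure (k = 6) and an illustrative ε = 1.5, M′ = 6^0.5 M ≈ 2.45 M, so a sixfold saving per query would still leave total demand about two and a half times larger.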

Also read: Google’s TurboQuant explained: The JPEG approach to AI compression

The technical reality supports this reading. TurboQuant addresses only inference memory, specifically the key-value (KV) cache that holds the attention keys and values of tokens a model has already processed. Training a model has fundamentally different memory demands, driven by activations, gradients, and optimizer states, and TurboQuant has zero effect on any of those.
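
To get a feel for what is being compressed, here is a rough KV-cache sizing sketch. The formula (keys plus values, per layer, per KV head, per cached token) is the standard way to size a transformer’s KV cache. The model dimensions, context length, and batch size are hypothetical, the 16-bit baseline is an assumption, and the compression is modeled as a flat divide-by-six taken from the “sixfold” figure above, a simplification of whatever TurboQuant actually does internally.

```python
# Rough KV-cache sizing sketch. All model dimensions are hypothetical, and the
# 6x factor is simply the "sixfold" figure quoted in the article.

def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bits_per_value: float) -> float:
    """Bytes needed to hold attention keys AND values for every cached token."""
    values = 2 * layers * kv_heads * head_dim * seq_len * batch  # 2 = keys + values
    return values * bits_per_value / 8

# Hypothetical mid-sized model serving 16 long (32K-token) requests at once.
config = dict(layers=32, kv_heads=8, head_dim=128, seq_len=32_768, batch=16)

baseline = kv_cache_bytes(**config, bits_per_value=16)  # assumed 16-bit cache
compressed = baseline / 6                               # flat "sixfold" saving

GIB = 1024 ** 3
print(f"16-bit KV cache: {baseline / GIB:.1f} GiB")  # 64.0 GiB
print(f"6x compressed:   {compressed / GIB:.1f} GiB")  # 10.7 GiB
```

Note what the sketch leaves out: model weights, and on the training side gradients, optimizer states, and activations, none of which this formula covers. The freed memory does not have to sit idle either; the original 64 GiB budget would now hold six times the batch (96 requests here), which is exactly the kind of extra capacity the Jevons argument expects operators to fill.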

There’s also the matter of actual order books. Micron’s CEO stated plainly that the company’s entire 2026 HBM supply is already sold out, hardly the position of a company facing a demand destruction event. Meanwhile, Ray Wang of SemiAnalysis said “the market has largely misread TurboQuant,” adding that “increasing memory demand will be required for both training and inference as AI models evolve.”

For consumers hoping TurboQuant would be their ticket back to affordable RAM, the picture is bleak. The structural shift toward AI datacenter memory was never going to be reversed by a compression algorithm. If anything, cheaper inference means more applications become economically viable, which means more infrastructure, which means more chips. The cruelest irony of TurboQuant may be that the very efficiency it promises ends up making the memory boom bigger, not smaller.

Also read: AI boom causing global memory crisis, warn Dell, HP and Lenovo

A journalist with a soft spot for tech, games, and things that go beep. While he waits for a delayed metro or reboots his brain, you’ll find him solving Rubik’s Cubes, bingeing F1, or hunting for the next great snack.