Apple updates Platform Security Guide for iPhone, iPad, Macs and more

Apple Platform Security Guide is now live.

Apple's approach is heavily based on hardware.

Apple has today updated its Platform Security Guide, a document that has only grown over the last decade. The guide currently runs to over 200 pages and outlines the security measures Apple implements across its devices and operating systems, including iOS, iPadOS and even watchOS. Poring over the document, we found some very interesting bits that stand out. Here are some of them.

Hardware-First Approach

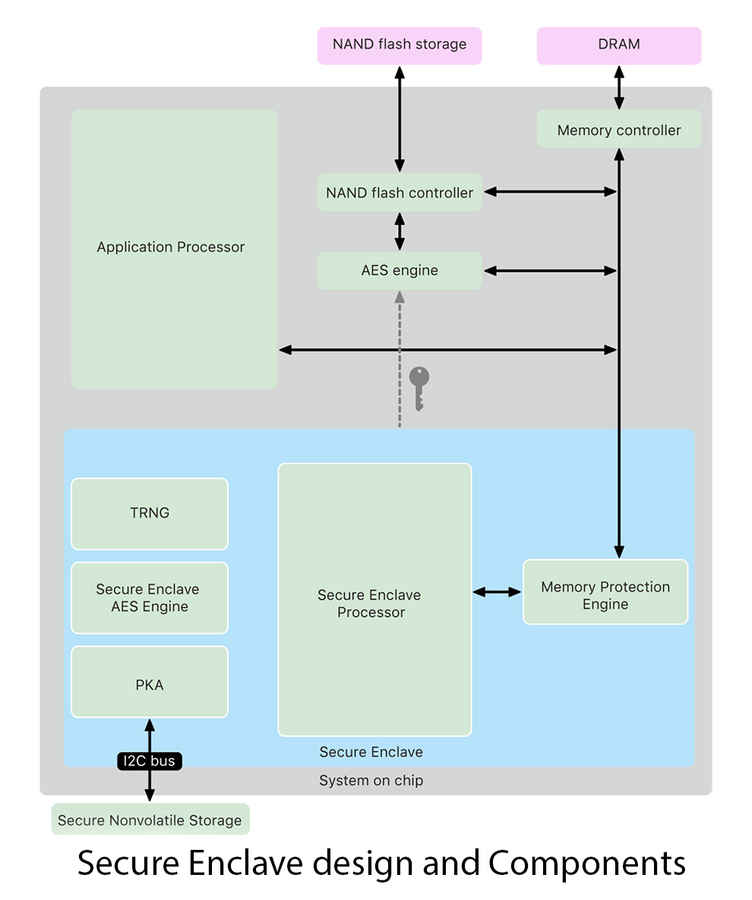

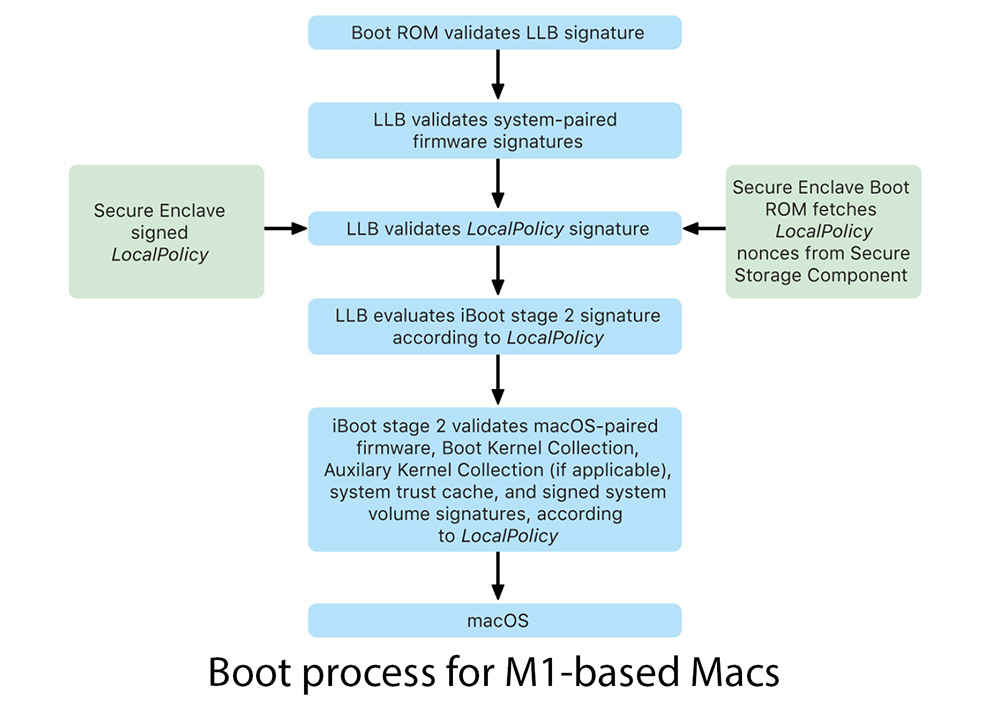

The Apple Platform Security Guide says that “For software to be secure, it must rest on hardware that has security built in.” While most of us might look at an Apple SoC like the A14 Bionic and talk mostly about its performance, many of the components built into that chip exist purely for security. Apple’s approach to securing devices starts at the silicon level, with something called the Secure Enclave. It is part of the SoC in both Apple's mobile devices and the M1-powered MacBook Pro and MacBook Air. The Secure Enclave follows the same design principles as the SoC does: a boot ROM to establish a hardware root of trust, an AES engine for efficient and secure cryptographic operations, and protected memory. The information stored within the Secure Enclave is processed by a dedicated Secure Enclave processor, which helps prevent side-channel attacks by malware that rely on sharing the same execution cores as the target software.

The Secure Enclave operates out of a dedicated portion of the DRAM, and Apple has built in a number of methods to keep this area secure. For starters, every time your device boots up, the Secure Enclave Boot ROM generates a random memory protection key for the Memory Protection Engine. This key is only valid until the next reboot, making it harder to crack. Your passcode plays a separate role: it is combined with keys held inside the Secure Enclave to unlock your data, which is why you need to enter it when you reboot your Apple device. Encrypting the memory block where the Secure Enclave resides isn’t enough on its own. Apple further builds in fail-safes: memory is stored along with an authentication tag, and if a tag fails to verify when the memory is read back, access to this part of the memory is disabled entirely until you reboot the system.
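The behaviour described above (a fresh key on every boot, an authentication tag on stored memory, and a lockout until reboot once a tag fails) can be illustrated with a small toy model. This is only a sketch under loud assumptions: the class `ToyProtectedMemory` and its methods are hypothetical names, HMAC-SHA256 stands in for whatever tag the real Memory Protection Engine computes, the data is authenticated but not encrypted, and the toy locks on the very first mismatch.

```python
import hashlib
import hmac
import secrets


class ToyProtectedMemory:
    """Toy model (not Apple's design): per-boot key, tagged blocks, lockout."""

    def __init__(self):
        self._boot()

    def _boot(self):
        # A fresh random key is generated on every simulated boot.
        self._key = secrets.token_bytes(32)
        self._blocks = {}      # address -> (data, tag)
        self.locked = False

    def reboot(self):
        # Rebooting discards the old key, the stored blocks and the lockout.
        self._boot()

    def _tag(self, address, data):
        # HMAC-SHA256 over address + data is an assumption made for this sketch.
        message = address.to_bytes(8, "big") + data
        return hmac.new(self._key, message, hashlib.sha256).digest()

    def write(self, address, data):
        if self.locked:
            raise RuntimeError("memory is locked until the next reboot")
        self._blocks[address] = (data, self._tag(address, data))

    def read(self, address):
        if self.locked:
            raise RuntimeError("memory is locked until the next reboot")
        data, tag = self._blocks[address]
        if not hmac.compare_digest(tag, self._tag(address, data)):
            self.locked = True  # refuse all further access until reboot
            raise RuntimeError("authentication tag mismatch")
        return data

    def tamper(self, address, data):
        """Simulate an attacker overwriting bytes without knowing the key."""
        _, old_tag = self._blocks[address]
        self._blocks[address] = (data, old_tag)


memory = ToyProtectedMemory()
memory.write(0, b"secret")
memory.tamper(0, b"forged")
try:
    memory.read(0)
except RuntimeError as error:
    print("read refused:", error)
```

Because the attacker in this model cannot recompute the tag without the per-boot key, the forged block is rejected, and nothing else can be read or written until `reboot()` is called.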

In order to secure the random cryptographic keys being generated, Apple fuses the SoC with a randomly generated unique ID (UID). This is then paired with a True Random Number Generator, which generates the cryptographic keys that are used to secure the data on your device. Given that the root cryptographic keys are tied to a specific SoC, it makes sense that a third-party repair to your storage or fingerprint sensor could disable that device. The guide says, “The UID allows data to be cryptographically tied to a particular device. For example, the key hierarchy protecting the file system includes the UID, so if the internal SSD storage is physically moved from one device to another, the files are inaccessible.” This explains why third-party repairs of TouchID sensors, or even repairs on Macs equipped with the T2 chip, can result in the device not working or being disabled altogether.
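To see why storage moved to another device becomes unreadable, here is a minimal sketch of a key hierarchy rooted in a per-device UID. Everything in it is an assumption for illustration: the function names are hypothetical, HMAC-SHA256 is used for derivation, and the XOR-based wrapping is a toy stand-in for the AES-based key wrapping that real hardware would use.

```python
import hashlib
import hmac
import secrets


def derive_device_key(uid: bytes, purpose: bytes) -> bytes:
    # The derived key depends on the UID, so it differs on every device.
    return hmac.new(uid, purpose, hashlib.sha256).digest()


def _pad(device_key: bytes) -> bytes:
    return hmac.new(device_key, b"pad", hashlib.sha256).digest()


def wrap_key(device_key: bytes, file_key: bytes):
    # Toy wrapping for a single 32-byte key: XOR with a pad, plus an integrity tag.
    wrapped = bytes(a ^ b for a, b in zip(file_key, _pad(device_key)))
    tag = hmac.new(device_key, b"tag" + wrapped, hashlib.sha256).digest()
    return wrapped, tag


def unwrap_key(device_key: bytes, wrapped: bytes, tag: bytes) -> bytes:
    expected = hmac.new(device_key, b"tag" + wrapped, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("key was wrapped by a different device")
    return bytes(a ^ b for a, b in zip(wrapped, _pad(device_key)))


# Two devices, each with its own randomly generated UID (hypothetical values).
uid_a = secrets.token_bytes(32)
uid_b = secrets.token_bytes(32)
key_a = derive_device_key(uid_a, b"file-system")
key_b = derive_device_key(uid_b, b"file-system")

file_key = secrets.token_bytes(32)
wrapped, tag = wrap_key(key_a, file_key)   # what ends up on the SSD

assert unwrap_key(key_a, wrapped, tag) == file_key   # original device: works
try:
    unwrap_key(key_b, wrapped, tag)                  # SSD moved to device B
except ValueError as error:
    print("unwrap refused:", error)
```

The wrapped key stored on the drive is useless without the UID-derived key, and the UID never leaves the chip it was fused into, which is the property the guide's quote describes.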

Bringing it all Together: TouchID and FaceID

The process of biometric authentication for Apple began when the company realized that an average person would unlock their phone up to 80 times a day. As a result, many people were not using a passcode on their devices at all, because who would want to type in a four (or more) digit code that many times? The robust security measures built into Apple devices, especially the iPhone, were therefore mostly going unused. This propelled the company to work on TouchID, a method that was not only convenient but also secure. Both TouchID and FaceID work on a rather elegant principle. They both rely on a mathematical representation of your fingerprint or face, and the way in which that information is kept up to date is incredible. For example, Apple has used millions upon millions of images to train its neural engine to work on faces wearing glasses, contact lenses, sunglasses, hats and so on.

The other thing to consider is the fact that storage on M1 Macs, iPhones, iPads and even the Apple Watch is encrypted by default. Every time you shut the device down, the storage is encrypted to prevent unauthorized access. When you reboot your phone, the process of decrypting is far more complex, with the phone’s security systems going through multiple layers of encryption, decryption and authentication before bringing you to the log-in screen. What’s truly astounding is the speed with which all this occurs. A few years ago, encrypting and decrypting a 256GB drive would take a significant amount of processing power and time. Now, it happens nearly instantly on your Apple device, driving home the point that Apple prioritises security and ease of use in equal measure.

Apple’s commitment to security doesn’t stop at securing the boot process. The company takes active measures to provide similar levels of security within its operating system environments as well. A good example of this is BlastDoor, a completely new sub-system that isolates the contents of incoming iMessages from the OS, parses the contents, removes any malicious code and finally passes on only the safe content. Basically, if someone tried to send you malware over iMessage, BlastDoor would prevent it from doing any damage to iOS. We’ve also taken a detailed look at how BlastDoor works.

Security goes Wireless

Perhaps one of the most exciting features that Apple users are going to be getting in the next few weeks is the ability to unlock their iPhones using the watch. The feature will go live when Apple pushes out iOS 14.5 and watchOS 7.4 for the iPhone and Apple Watch. Since the pandemic, masks have become a staple for all of us, rendering FaceID practically unusable. This is Apple’s solution to the problem, at least for those who have both an iPhone and an Apple Watch. The way this feature will work is that once FaceID detects you are wearing a face mask, it will automatically use the proximity of your watch to unlock the phone.

While this might seem like an easy way for people to pry into your personal business, Apple has taken a number of steps to minimize abuse. Firstly, you will need to have a passcode on the Apple Watch, and the watch must be unlocked when you’re trying to unlock your phone. Secondly, every time the phone is unlocked using this method, you will get haptic feedback on your wrist letting you know the phone has been unlocked using the Apple Watch. But perhaps the most important layer of security is the ability to lock your phone again from the watch. Securing your phone from prying eyes isn’t the only step Apple takes to prevent unauthorized access. For anyone wondering how secure the communication between the watch and the iPhone is, there’s not much to worry about.

When an Apple Watch and an iPhone are paired together, the connection is secure and encrypted. In order to unlock the iPhone, the Apple Watch must send it some sort of message to do so. This “message” is generated by the respective devices’ own Secure Enclaves, and even if those packets were to be intercepted, deciphering them is next to impossible. In the unlikely scenario that someone does manage to decrypt these unlock packets, remember that every time you reboot your phone or watch, a new key is generated, rendering the previous ones useless. If Apple were to add TouchID to the Apple Watch’s Digital Crown, the unlock process would become even smoother, since users wouldn’t have to enter a passcode to unlock their watches first.
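The article does not spell out what the unlock "message" looks like, so the following is only a sketch of one standard way two paired devices can prove themselves to each other: a challenge-response exchange using HMAC over a shared pairing key. It is not Apple's actual protocol. The `Phone` and `Watch` classes, the shared `pairing_key`, and the single-use challenge are all assumptions made for illustration.

```python
import hashlib
import hmac
import secrets


class Watch:
    """Hypothetical watch that answers challenges only while unlocked."""

    def __init__(self, pairing_key: bytes, unlocked: bool = True):
        self._key = pairing_key
        self.unlocked = unlocked

    def respond(self, challenge: bytes) -> bytes:
        if not self.unlocked:
            raise PermissionError("watch must be unlocked first")
        return hmac.new(self._key, challenge, hashlib.sha256).digest()


class Phone:
    """Hypothetical phone that issues single-use random challenges."""

    def __init__(self, pairing_key: bytes):
        self._key = pairing_key
        self._pending = None

    def new_challenge(self) -> bytes:
        self._pending = secrets.token_bytes(16)
        return self._pending

    def try_unlock(self, response: bytes) -> bool:
        if self._pending is None:
            return False  # no outstanding challenge, e.g. a replayed response
        expected = hmac.new(self._key, self._pending, hashlib.sha256).digest()
        self._pending = None  # each challenge can be answered only once
        return hmac.compare_digest(response, expected)


pairing_key = secrets.token_bytes(32)  # stands in for keys agreed at pairing
phone = Phone(pairing_key)
watch = Watch(pairing_key)

response = watch.respond(phone.new_challenge())
print("unlocked:", phone.try_unlock(response))
print("replay accepted:", phone.try_unlock(response))
```

In this model, an intercepted response is useless later because the phone discards each challenge after one attempt, and a locked watch never answers at all, mirroring the requirement that the watch be unlocked before it can unlock the phone.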

While Apple has published its security guide every year for the last decade, this is the first time the company has gone into such depth to explain the end-to-end process of securing your devices. This also illustrates why security is far tighter on Apple devices compared with, say, Android smartphones or Windows laptops. Apple’s control over the entire hardware and software pipeline is what makes this level of security possible.

Swapnil was Digit's resident camera nerd, (un)official product photographer and the Reviews Editor. Swapnil has moved on to newer challenges.