Prith Banerjee and Jamie J. Gooch from Ansys talk about daring to dream of Simulation’s Reality in 2041

We're celebrating our 20th birthday this month, and we've invited industry experts, researchers and scientists to write in and paint a vision of the future, 20 years from now. Here's what Prith Banerjee, Chief Technology Officer at Ansys, and Jamie J. Gooch, Corporate Content Manager at Ansys, had to share about their vision of the future.

It’s 2041. Asking your phone to send an autonomous electric vehicle to pick you up for work is common. You still go to work on occasion, but more for the benefits of face-to-face social interaction than any real need to be in the office. Your home office can access the cloud’s essentially unlimited computing power and capacity via 8G, of course, but interacting with holograms of your colleagues still isn’t quite the same as seeing them in person.

Besides, it’s your boss’s birthday today. There will be cake.

That reminds you: You wanted to simulate the effect of a significant glucose spike on the artificial pancreas your team is designing. As your AV smoothly navigates the city streets, you call up your simulation software’s AI assistant, which greets you by name. You tell it what you want to do. It asks you a few questions to nail down the requirements and informs you that the new simulation results will be ready before you even get to the office.

“Would you like to 3D print new models using the 10 most promising altered-cell scaffold materials?” it asks.

No need for that yet; the interactive 3D simulations will tell you all you need to know.

Maybe that scenario sounds impossible, but at Ansys we have been singularly focused on helping customers achieve the seemingly impossible for more than 50 years. We’ve seen what our customers have been able to create with our simulation software, and we know that today’s incredible rate of innovation will seem painfully slow two decades from now.

Twenty years ago, when Digit first began publishing, we were all impressed by a mobile phone that had a tiny color screen. We marveled at the first self-contained artificial heart implant. In the simulation world, Ansys 6.0 made large-scale modeling practical for the first time. Today, we can create 3D scans using lidar sensors in our phones, thousands of artificial hearts are implanted each year and the definition of a “large-scale” model has expanded to include entire systems.

Augmented reality will help us visualize what we cannot see with the naked eye, such as electromagnetic interference.

Twenty years from now, as foundational technologies like artificial intelligence/machine learning (AI/ML), high-performance computing (HPC) and augmented reality (AR) simultaneously make huge leaps forward, simulation software will build upon those innovations with complete solutions in different verticals. The ability to simulate what will happen and optimize products, systems and processes before a physical prototype is built will enable us to realize the full promise of today’s potentially world-changing innovations: digital twins, personalized medicine, autonomous vehicles, Industry 4.0, smart cities and more.

Simulation Simplified

To understand the future of simulation, you must first understand how simulation software works today. At a very high level: Simulation enables you to predict what will happen to something if it is placed in a given environment under a certain set of circumstances. That “something” could be a soda can being dropped from a shopping cart, a cell phone signal interfering with electronics in a hospital room, or a printed circuit board (PCB) expanding because of the heat of an integrated circuit.

The world around us is governed by the laws of physics, which are modeled by partial differential equations (PDEs), such as the Navier-Stokes equations for fluids or Maxwell’s equations for electromagnetics. We simulate the physics numerically using methods such as finite element analysis (FEA), used in our Ansys Mechanical solver, or the finite volume method, used in our Ansys Fluent fluid dynamics solver. These numerical solvers apply well-known mathematical principles to simple shapes – such as squares or triangles – that are overlaid as a mesh on a model of what’s being simulated.

The mesh is created by the simulation software, which uses it to calculate how the individual cells will react to outside loads like heat, pressure or electromagnetic interference, and how they will interact with one another. When combined in a simulation run, those reactions and interactions of cells in the mesh enable the simulation software to predict whether that falling soda can will burst on impact, how to minimize electromagnetic interference, how to best cool a PCB to prevent cracking, or just about anything else you can imagine.
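To make the mesh idea concrete, here is a toy sketch of the finite element approach in one dimension. It is an illustration only, not how Ansys Mechanical or Fluent are implemented. It assumes a simple steady equation, -u''(x) = f on [0, 1] with u = 0 at both ends and a constant load f, split into a uniform mesh of linear elements; the function name `solve_poisson_1d_fem` is hypothetical. Each element contributes a small local matrix, the contributions are assembled into one global system, and solving that system gives the answer at every mesh node.

```python
# Toy 1D finite element solver (illustration only, not Ansys code):
# solve -u''(x) = f on [0, 1] with u(0) = u(1) = 0, constant load f,
# on a uniform mesh of linear ("hat function") elements.


def solve_poisson_1d_fem(num_elements, f=1.0):
    """Return (nodes, u): mesh node positions and nodal solution values."""
    if num_elements < 2:
        raise ValueError("need at least 2 elements to have an interior node")
    h = 1.0 / num_elements                     # element length
    nodes = [i * h for i in range(num_elements + 1)]

    # Assemble global stiffness (tridiagonal) and load vectors element by element.
    # Each linear element adds (1/h) * [[1, -1], [-1, 1]] to its two nodes.
    diag = [0.0] * (num_elements + 1)
    off = [0.0] * num_elements                 # off[e] couples nodes e and e+1
    load = [0.0] * (num_elements + 1)
    for e in range(num_elements):
        diag[e] += 1.0 / h
        diag[e + 1] += 1.0 / h
        off[e] -= 1.0 / h
        # Exact integral of constant f times each hat function over the element.
        load[e] += f * h / 2.0
        load[e + 1] += f * h / 2.0

    # Boundary conditions u(0) = u(1) = 0: keep only the interior nodes.
    n = num_elements - 1
    b = diag[1:num_elements]                   # main diagonal
    a = off[1:n]                               # sub-diagonal (row k, column k-1)
    c = off[1:n]                               # super-diagonal (matrix is symmetric)
    d = load[1:num_elements]

    # Thomas algorithm: forward elimination, then back substitution.
    cp = [0.0] * n
    dp = [0.0] * n
    dp[0] = d[0] / b[0]
    if n > 1:
        cp[0] = c[0] / b[0]
    for k in range(1, n):
        m = b[k] - a[k - 1] * cp[k - 1]
        if k < n - 1:
            cp[k] = c[k] / m
        dp[k] = (d[k] - a[k - 1] * dp[k - 1]) / m
    x = [0.0] * n
    x[-1] = dp[-1]
    for k in range(n - 2, -1, -1):
        x[k] = dp[k] - cp[k] * x[k + 1]

    return nodes, [0.0] + x + [0.0]


nodes, u = solve_poisson_1d_fem(10)
for xi, ui in zip(nodes, u):
    print(f"x = {xi:.1f}  u = {ui:.5f}  exact = {xi * (1 - xi) / 2:.5f}")
```

For this particular 1D problem, linear elements happen to reproduce the exact solution x(1 - x)/2 at the nodes, which makes the output easy to check. Real 2D and 3D models with complex geometry and coupled physics generally only approximate the true answer, which is where mesh quality and density start to matter.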

Simulation is a tested means of improving existing products because it enables engineers to explore “what-if” questions without going to the time and expense of creating a physical prototype for every scenario. Simulation is also used to help engineers understand the best way to design something completely new.

Simulating impacts, electromagnetic interference, fluid flows and more is common today, as is relying on simulation to suggest optimized design alternatives. However, simulations can get much more complex. They can take into account the effects of multiple physics simultaneously over distinct domains, or in large assemblies and systems, or when scaling from the microscopic to the mission level. These complex simulations require significant compute power to solve in a reasonable time if maximum accuracy is required.

Humans aren’t immune to the effects of ballooning product design and development complexity, either. Knowing how to set up, run and modify advanced simulations requires significant expertise. The more complex the simulation, the more expertise is required. This leaves a relatively small pool of simulation experts, which keeps simulation from becoming ubiquitous.

Simulation Without Compromise

Technologies already under development promise to simplify and democratize the use of simulation by allowing more people to solve more multiphysics, multi-domain, multi-scale problems with:

- High speed

- High accuracy

- Easy-to-use workflows

- Robustness to ensure repeatable results

Unfortunately, there are tradeoffs involved with these four pillars. For a given problem, you can make the simulation run faster by making a mesh with fewer points, but then you lose accuracy. You can have higher accuracy with 10X more mesh points, but then the simulation runtimes increase significantly.
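The speed/accuracy tradeoff shows up even in a toy problem. The sketch below assumes a second-order finite-difference scheme (a simpler cousin of the FEA and finite volume methods described above) for -u'' = π² sin(πx) on [0, 1] with u = 0 at both ends, whose exact solution is sin(πx). It refines the mesh tenfold twice and reports the maximum error at the mesh points; `fd_poisson_error` is a hypothetical name.

```python
import math


def fd_poisson_error(n_points):
    """Max nodal error of a 2nd-order finite-difference solve of -u'' = pi^2 sin(pi x)."""
    h = 1.0 / (n_points + 1)                   # spacing between mesh points
    x = [(i + 1) * h for i in range(n_points)] # interior points only
    # Discrete system: -u[i-1] + 2 u[i] - u[i+1] = h^2 f(x[i]), with u = 0 at the ends.
    d = [h * h * math.pi ** 2 * math.sin(math.pi * xi) for xi in x]

    # Thomas algorithm specialised to diagonal 2 and off-diagonals -1.
    cp = [0.0] * n_points
    dp = [0.0] * n_points
    cp[0] = -1.0 / 2.0
    dp[0] = d[0] / 2.0
    for i in range(1, n_points):
        m = 2.0 + cp[i - 1]                    # 2 - (-1) * cp[i-1]
        cp[i] = -1.0 / m
        dp[i] = (d[i] + dp[i - 1]) / m         # (d - (-1) * dp[i-1]) / m
    u = [0.0] * n_points
    u[-1] = dp[-1]
    for i in range(n_points - 2, -1, -1):
        u[i] = dp[i] - cp[i] * u[i + 1]

    return max(abs(ui - math.sin(math.pi * xi)) for ui, xi in zip(u, x))


for n in (9, 99, 999):
    print(f"{n:4d} unknowns   max error {fd_poisson_error(n):.2e}")
```

Each tenfold increase in points cuts the error roughly a hundredfold for this second-order scheme, but it also multiplies the number of unknowns by ten. In 3D, shrinking the spacing tenfold in every direction multiplies the point count by a thousand, which is why fine meshes quickly become expensive.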

Using HPC and AI/ML, we at Ansys have made our simulation tools run more quickly, more accurately and more robustly while making them easier to use. Still, many of the tools need hundreds of parameters to be set by an advanced engineer so that the solver runs in the best possible manner. In the future, AI/ML will automatically learn to set these parameters. We can imagine a future state where a designer gives voice instructions in natural language, enabling the AI/ML to set the solver parameters automatically and run simulations for all possible design choices to identify the best possible product.

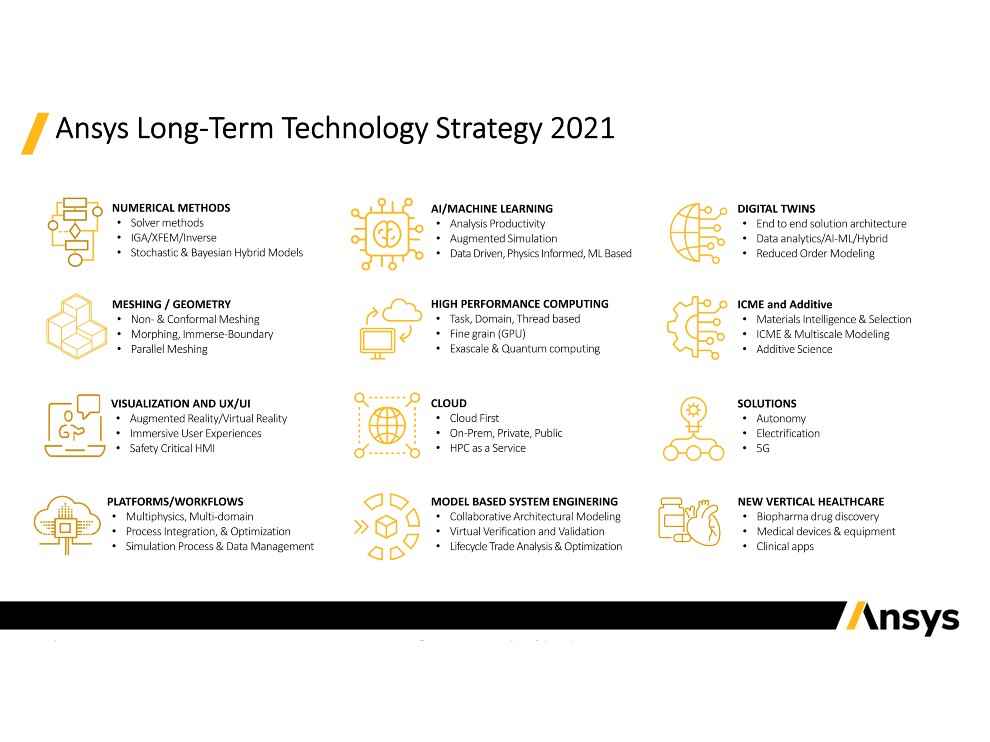

Our long-term technology strategy, shown in the figure, outlines how we plan to solve this problem for our customers over the next 20 years.

By 2041, computing won’t simply continue the steady march of productivity gains described by Moore’s Law. Simulation software will take advantage of the speed increases that exascale and quantum computing bring to the entire hardware stack, from processors to memory to interconnects. Engineers won’t need to choose between speed and accuracy. Many simulations will solve in near real-time, enabling on-the-fly exploration of design modifications. The increased computing power will also provide answers to complex problems that would be impractical to fully simulate today.

Computing innovations will spin off speed boosts at all levels: cloud computing, on-premises HPC and the edge, where connected devices will collect data that will be used to inform digital twins and help engineers optimize the next generation of products. All of that data and more will be needed to train AI algorithms that will fundamentally change simulation software through increased automation, while AR will make the software easier to use.

In addition to making finer meshes practical for greater accuracy, such an environment will let new meshing innovations flourish. For example, isogeometric analysis, which runs the analysis directly on the spline-based geometry that CAD models already use, will natively bring FEA into conventional computer-aided design (CAD) tools to allow responsive simulation for complex models.

Smart cities will rely on simulation for everything from setting land and air traffic patterns to predicting energy use.

As software gets smarter and more responsive thanks to more advanced HPC and AI, it will also visually guide experts and novices alike through simulation setup, optimization and solving. This will democratize simulation throughout product design and development and beyond, giving novices the confidence to use simulation as they use PowerPoint today. Interacting with AR-enabled digital twins will be the status quo for virtual testing.

Simulation from a Grand to Granular Scale

The increased speed, accuracy and ease of use will enable model-based systems engineering (MBSE) to expand its foothold in many verticals. MBSE supports system requirements from the earliest conceptual stage, connecting people, hardware and software design processes and workflows at the system level rather than treating them as individual parts. MBSE will support large-scale digital mission engineering and end-to-end engineering lifecycle management that will have far-reaching effects on design for manufacturing, sustainable material choices and knowledge transfer.

In 2041, simulation will be faster, more accurate and ubiquitous. It will extend beyond parts, assemblies and systems to encompass multiphysics across multiple domains. While the fulfillment of those predictions alone has world-altering repercussions, it seems a bit safe. Besides, what will all the simulation experts be doing if novices and AI are running simulations? They’ll move beyond what AI can reveal from existing product data and focus on the atoms that make up the molecules that make up the products.

Think about it: Someone has characterized materials and determined their Young’s modulus so that we can simulate the effects of stress and strain. But inside those materials are atoms or molecules. If we could go there, we could simulate without limitation. Truly optimized creations – based on the best materials at a molecular level – would spring forth regardless of our preconceived notions about what a product should look like. It would look like what it should be.
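For a sense of what that single measured constant does in today’s simulations: in the linear elastic range, Hooke’s law relates stress σ to strain ε through Young’s modulus E. A worked illustration, assuming an aluminum alloy with E ≈ 70 GPa (a typical textbook value) and an applied stress of 100 MPa chosen purely for illustration:

```latex
\sigma = E\,\varepsilon
\quad\Longrightarrow\quad
\varepsilon = \frac{\sigma}{E}
= \frac{100 \times 10^{6}\ \text{Pa}}{70 \times 10^{9}\ \text{Pa}}
\approx 1.43 \times 10^{-3}
```

That is a strain of roughly 0.14%. One continuum number stands in for the collective behavior of an enormous number of atoms, which is exactly the level of detail the molecular-scale vision would simulate directly.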

For example: Instead of building a CAD model of a soda can and running a structural analysis to see whether the design works, we would specify – via natural language instead of a CAD user interface – that we need a container to hold 12 ounces of X, and the software would generatively design and simulate options at multiple scales, down to the effervescence of the soda.
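Today’s tools can already do a crude version of “state the requirement, let the software find the shape.” The toy sketch below assumes the requirement is 12 US fluid ounces (about 355 mL), models the can as a plain cylinder and treats sheet-metal area as the only cost. It sweeps candidate radii, derives the height each one needs to hold the volume, and keeps the cheapest. `best_cylinder` is a hypothetical name, and the model ignores wall thickness, internal pressure, manufacturing and ergonomics, so its answer (a can about as tall as it is wide) is not what real cans look like.

```python
import math

US_FL_OZ_ML = 29.5735  # approximate millilitres per US fluid ounce (assumption: US ounces)


def best_cylinder(volume_ml, r_min=1.0, r_max=10.0, steps=9000):
    """Sweep candidate radii (cm) and return (r, h, area) with the least sheet area."""
    best = None
    for k in range(steps + 1):
        r = r_min + (r_max - r_min) * k / steps
        h = volume_ml / (math.pi * r * r)                  # 1 mL = 1 cm^3, so h is in cm
        area = 2 * math.pi * r * r + 2 * math.pi * r * h   # two ends plus side wall
        if best is None or area < best[2]:
            best = (r, h, area)
    return best


r, h, area = best_cylinder(12 * US_FL_OZ_ML)
print(f"radius {r:.2f} cm, height {h:.2f} cm, area {area:.1f} cm^2")
```

A brute-force sweep is used here only because it mirrors “generate options, simulate each, keep the best”; for this one-variable problem calculus gives the answer directly (height equals diameter).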

Now imagine if the soda can were a life-extending pharmaceutical and the requirements were based on an individual patient’s biological vitals. The result would be the perfect pill, additively manufactured on-demand for each patient.

Just imagine the possibilities, then simulate them and then create them. Will we be there in 20 years? With simulation, all things are possible.

– By Prith Banerjee, Chief Technology Officer, Ansys;

and Jamie J. Gooch, Corporate Content Manager, Ansys

To read what other industry leaders and experts have to say about the future in their respective fields, visit our 20th Anniversary Microsite.
