After Anthropic controversy, OpenAI revises Pentagon deal terms, plans to add stronger anti-surveillance clauses: All details

OpenAI said it rushed its Pentagon deal and will add stronger anti-surveillance safeguards.

Anthropic’s refusal to accept unclear terms, and OpenAI’s deal that followed, sparked backlash and the Cancel ChatGPT trend.

The row raised wider concerns about AI, government contracts, and civil liberties.


Sam Altman now understands why Anthropic wanted the terms and conditions of its deal with the United States Department of Defence to be carefully shaped. What began as a disagreement over how government agencies could use advanced AI tools quickly turned into a larger debate about civil liberties, military power and corporate responsibility. In a recent post on X, Altman acknowledged that OpenAI’s agreement with the Pentagon had been rushed, a shift in tone that came after significant user backlash. As reported earlier, many users protested the deal between OpenAI and the Pentagon by deleting ChatGPT as part of the Cancel ChatGPT trend.

The dispute escalated when US Defence Secretary Pete Hegseth called Anthropic a ‘supply-chain risk’. Around the same time, OpenAI reached its own agreement with the United States Department of Defence. The episode has raised new questions about how AI companies handle government contracts, especially when the work is sensitive and raises ethical concerns.


Anthropic was founded in 2021 by former OpenAI researchers, including Dario Amodei. The company presents itself as a safer and more careful option in the AI industry. In response to the Pentagon’s requests that its technology be available for all lawful purposes, Anthropic said it needed clear terms to ensure its systems would not be used for domestic surveillance of Americans or for autonomous lethal weapons.


The Defence Department disagreed, arguing that a private contractor could not decide how its tools would be used in national security work. When the two sides failed to reach an agreement by a Friday deadline, Hegseth publicly declared Anthropic a ‘supply-chain risk to national security’, a move that could block it from government contracts.

At the same time, OpenAI was holding its own talks with the Pentagon. Unlike Anthropic, OpenAI agreed that its technology could be used for all lawful purposes but negotiated safeguards. The Pentagon also allowed some OpenAI employees to work alongside government staff on classified projects to help ensure system safety.

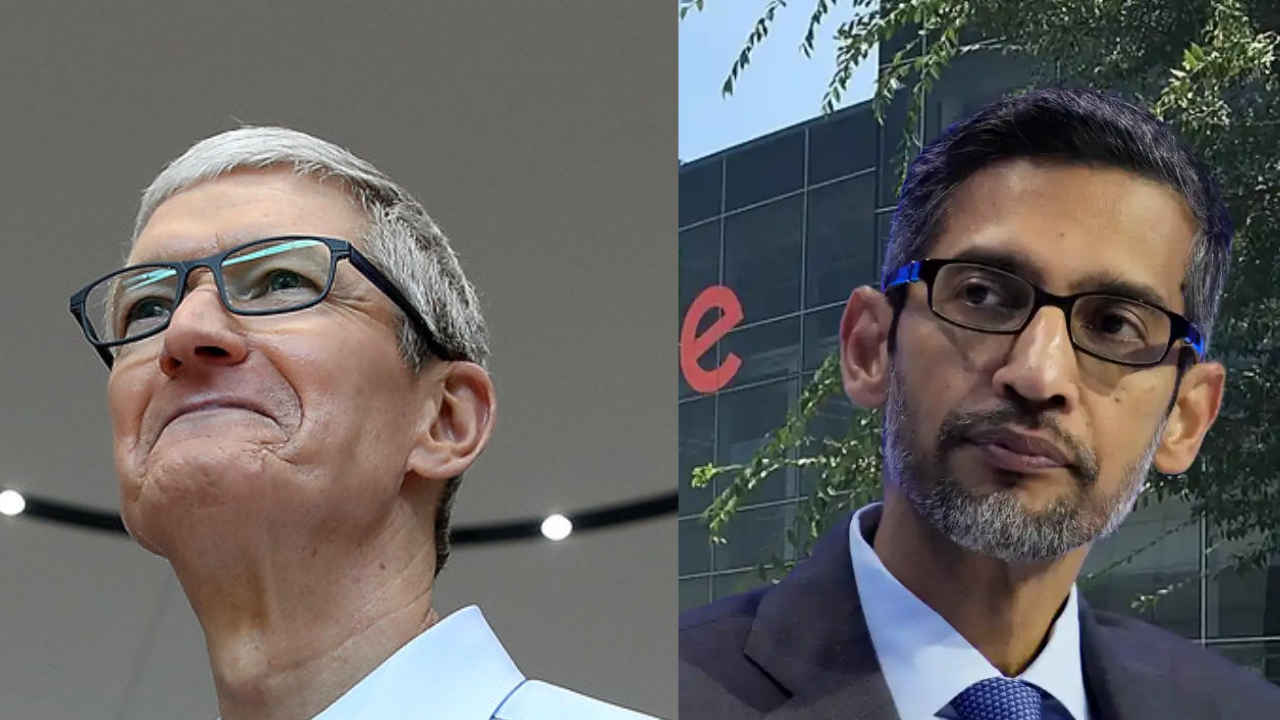


The deal triggered backlash online, with reports of users uninstalling ChatGPT in protest. In response, Sam Altman posted a message on X addressing the controversy. ‘We shouldn’t have rushed to get this out on Friday. The issues are super complex and demand clear communication,’ he wrote. He added, ‘We were genuinely trying to de-escalate things and avoid a much worse outcome, but I think it just looked opportunistic and sloppy. Good learning experience for me as we face higher-stakes decisions in the future.’

Altman also said it was ‘critical to protect the civil liberties of Americans’ and noted that the Pentagon had assured OpenAI its tools would not be used for domestic surveillance. He further said Anthropic should not be designated a supply-chain risk and that he hoped the Defence Department would offer it the same terms.